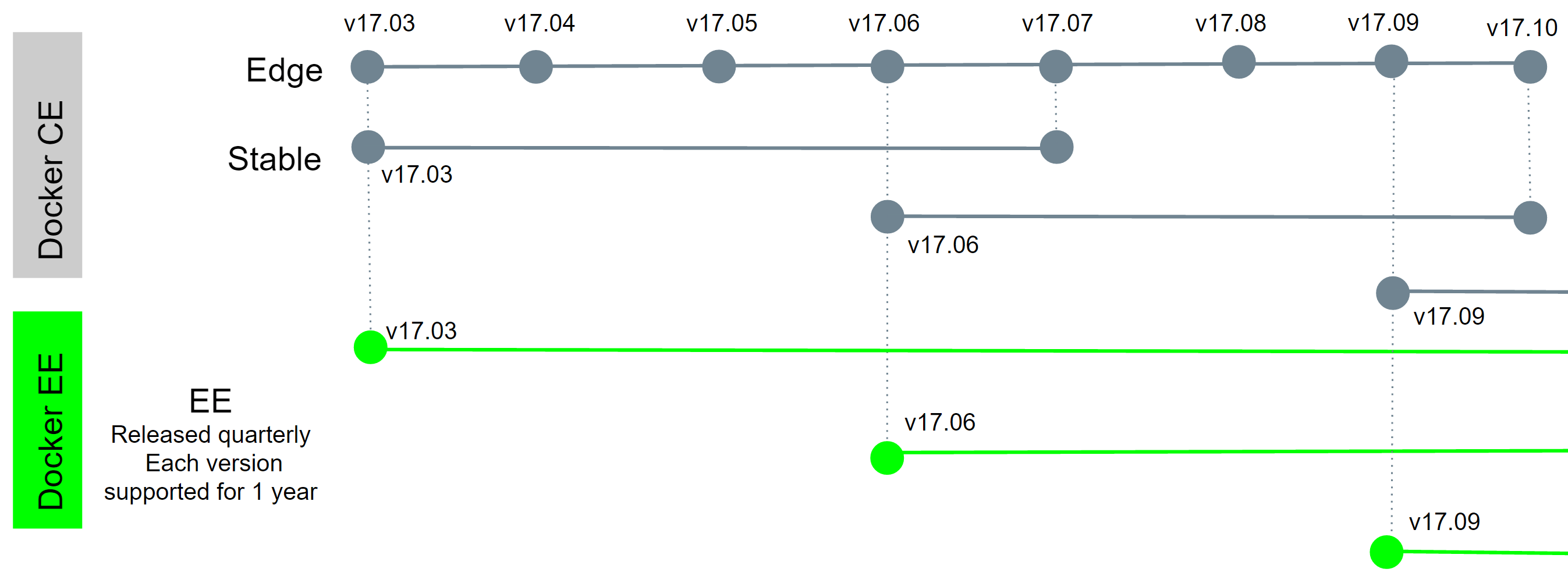

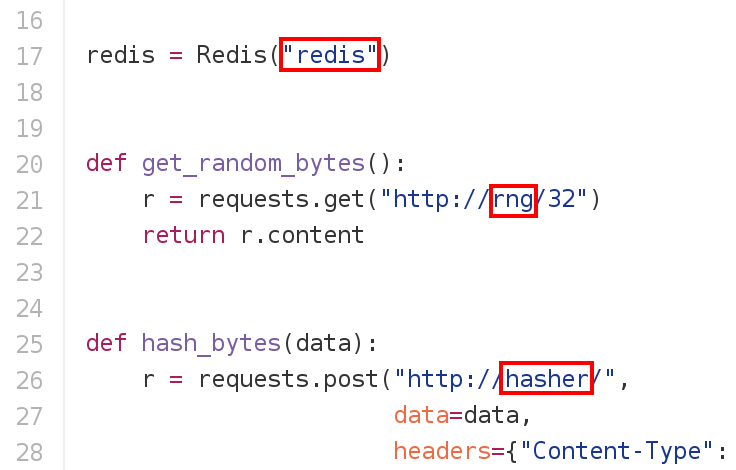

class: title .small[ LISA17 T9 Build, Ship, and Run Microservices on a Docker Swarm Cluster .small[.small[ **Be kind to the WiFi!** *Use the 5G network* <br/> *Don't use your hotspot* <br/> *Don't stream videos from YouTube, Netflix, etc. <br/>(if you're bored, watch local content instead)* <!-- Also: share the power outlets <br/> *(with limited power comes limited responsibility?)* <br/> *(or something?)* --> Thank you! ] ] ] .debug[(inline)] --- ## Intros - Hello! We are AJ ([@s0ulshake](https://twitter.com/s0ulshake), Travis CI) & Jérôme ([@jpetazzo](https://twitter.com/jpetazzo), Docker Inc.) -- - This is our collective Docker knowledge:  .debug[(inline)] --- ## Logistics - The tutorial will run from 1:30pm to 5:00pm - This will be fast-paced, but DON'T PANIC! - There will be a coffee break at 3:00pm <br/> (please remind us if we forget about it!) - Feel free to interrupt for questions at any time - All the content is publicly available (slides, code samples, scripts) One URL to remember: http://container.training - Live feedback, questions, help on [Gitter](https://gitter.im/jpetazzo/workshop-20171031-sanfrancisco) .debug[(inline)] --- ## A brief introduction - This was initially written to support in-person, instructor-led workshops and tutorials - You can also follow along on your own, at your own pace - We included as much information as possible in these slides - We recommend having a mentor to help you ... - ... Or be comfortable spending some time reading the Docker [documentation](https://docs.docker.com/) ... - ... 
And looking for answers in the [Docker forums](https://forums.docker.com), [StackOverflow](http://stackoverflow.com/questions/tagged/docker), and other outlets .debug[[swarm/intro.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/intro.md)] --- class: self-paced ## Hands on, you shall practice - Nobody ever became a Jedi by spending their lives reading Wookieepedia - Likewise, it will take more than merely *reading* these slides to make you an expert - These slides include *tons* of exercises - They assume that you have access to a cluster of Docker nodes - If you are attending a workshop or tutorial: <br/>you will be given specific instructions to access your cluster - If you are doing this on your own: <br/>you can use [Play-With-Docker](http://www.play-with-docker.com/) and read [these instructions](https://github.com/jpetazzo/orchestration-workshop#using-play-with-docker) for extra details .debug[[swarm/intro.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/intro.md)] --- name: toc-chapter-1 ## Chapter 1 - [Pre-requirements](#toc-pre-requirements) - [VM environment](#toc-vm-environment) - [Our sample application](#toc-our-sample-application) - [Running the application](#toc-running-the-application) - [Identifying bottlenecks](#toc-identifying-bottlenecks) - [SwarmKit](#toc-swarmkit) - [Creating our first Swarm](#toc-creating-our-first-swarm) .debug[(auto-generated TOC)] --- name: toc-chapter-2 ## Chapter 2 - [Running our first Swarm service](#toc-running-our-first-swarm-service) - [Deploying a local registry](#toc-deploying-a-local-registry) - [Overlay networks](#toc-overlay-networks) - [Global scheduling](#toc-global-scheduling) - [Integration with Compose](#toc-integration-with-compose) - [Updating services](#toc-updating-services) .debug[(auto-generated TOC)] --- name: toc-chapter-3 ## Chapter 3 - [Secrets management and encryption at rest](#toc-secrets-management-and-encryption-at-rest) - [Least privilege 
model](#toc-least-privilege-model) - [Centralized logging](#toc-centralized-logging) - [Setting up ELK to store container logs](#toc-setting-up-elk-to-store-container-logs) - [Metrics collection](#toc-metrics-collection) - [What's next?](#toc-whats-next) .debug[(inline)] --- name: toc-pre-requirements class: title Pre-requirements .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Pre-requirements - Computer with internet connection and a web browser - For instructor-led workshops: an SSH client to connect to remote machines - on Linux, OS X, FreeBSD... you are probably all set - on Windows, get [putty](http://www.putty.org/), Microsoft [Win32 OpenSSH](https://github.com/PowerShell/Win32-OpenSSH/wiki/Install-Win32-OpenSSH), [Git BASH](https://git-for-windows.github.io/), or [MobaXterm](http://mobaxterm.mobatek.net/) - For self-paced learning: SSH is not necessary if you use [Play-With-Docker](http://www.play-with-docker.com/) - Some Docker knowledge (but that's OK if you're not a Docker expert!) 
.debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- class: in-person, extra-details ## Nice-to-haves - [Mosh](https://mosh.org/) instead of SSH, if your internet connection tends to lose packets <br/>(available with `(apt|yum|brew) install mosh`; then connect with `mosh user@host`) - [GitHub](https://github.com/join) account <br/>(if you want to fork the repo) - [Slack](https://community.docker.com/registrations/groups/4316) account <br/>(to join the conversation after the workshop) - [Docker Hub](https://hub.docker.com) account <br/>(it's one way to distribute images on your cluster) .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- class: extra-details ## Extra details - This slide should have a little magnifying glass in the top left corner (If it doesn't, it's because CSS is hard — Jérôme is only a backend person, alas) - Slides with that magnifying glass indicate slides providing extra details - Feel free to skip them if you're in a hurry! .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- ## Hands-on sections - The whole workshop is hands-on - We will see Docker in action - You are invited to reproduce all the demos - All hands-on sections are clearly identified, like the gray rectangle below .exercise[ - This is the stuff you're supposed to do! 
- Go to [container.training](http://container.training/) to view these slides - Join the chat room on [Gitter](https://gitter.im/jpetazzo/workshop-20171031-sanfrancisco) ] .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- name: toc-vm-environment class: title VM environment .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- class: in-person # VM environment - To follow along, you need a cluster of five Docker Engines - If you are doing this with an instructor, see next slide - If you are doing (or re-doing) this on your own, you can: - create your own cluster (local or cloud VMs) with Docker Machine ([instructions](https://github.com/jpetazzo/orchestration-workshop/tree/master/prepare-machine)) - use [Play-With-Docker](http://play-with-docker.com) ([instructions](https://github.com/jpetazzo/orchestration-workshop#using-play-with-docker)) - create a bunch of clusters for you and your friends ([instructions](https://github.com/jpetazzo/orchestration-workshop/tree/master/prepare-vms)) .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- class: pic, in-person  .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- class: in-person ## You get five VMs - Each person gets 5 private VMs (not shared with anybody else) - They'll remain up until the day after the tutorial - You should have a little card with login+password+IP addresses - You can automatically SSH from one VM to another .exercise[ <!-- ```bash for N in $(seq 1 5); do ssh -o StrictHostKeyChecking=no node$N true done ``` --> - Log into the first VM (`node1`) with SSH or MOSH - Check that you can SSH (without password) to `node2`: ```bash ssh node2 ``` - Type `exit` or `^D` to come back to node1 <!-- ```bash exit``` --> ] 
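To check all five nodes in one go rather than one at a time, a loop along these lines can help. This is a minimal sketch, not part of the workshop scripts: `check_nodes` and the `SSH_CMD` variable are hypothetical names, and it assumes the `node1` to `node5` aliases and the pre-authorized SSH keys described above.

```bash
# Hypothetical helper: report which of node1..node5 accept SSH without a password.
# SSH_CMD is an illustrative override hook; it defaults to the real ssh client.
check_nodes() {
  for n in 1 2 3 4 5; do
    # BatchMode=yes makes ssh fail instead of prompting for a password.
    if ${SSH_CMD:-ssh} -o BatchMode=yes -o StrictHostKeyChecking=no "node$n" true; then
      echo "node$n: ok"
    else
      echo "node$n: unreachable"
    fi
  done
}
```

Define the function on `node1`, then run `check_nodes`; any node reported as unreachable is worth mentioning to your instructor before going further.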
.debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- ## If doing or re-doing the workshop on your own ... - Use [Play-With-Docker](http://www.play-with-docker.com/)! - Main differences: - you don't need to SSH to the machines <br/>(just click on the node that you want to control in the left tab bar) - Play-With-Docker automagically detects exposed ports <br/>(and displays them as little badges with port numbers, above the terminal) - You can access HTTP services by clicking on the port numbers - exposing TCP services requires something like [ngrok](https://ngrok.com/) or [supergrok](https://github.com/jpetazzo/orchestration-workshop#using-play-with-docker) <!-- - If you use VMs deployed with Docker Machine: - you won't have pre-authorized SSH keys to bounce across machines - you won't have host aliases --> .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- class: self-paced ## Using Play-With-Docker - Open a new browser tab to [www.play-with-docker.com](http://www.play-with-docker.com/) - Confirm that you're not a robot - Click on "ADD NEW INSTANCE": congratulations, you have your first Docker node! - When you need more nodes, just click on "ADD NEW INSTANCE" again - Note the countdown in the corner; when it expires, your instances are destroyed - If you give your URL to somebody else, they can access your nodes too <br/> (You can use that for pair programming, or to get help from a mentor) - Loving it? Not loving it? Tell it to the wonderful authors, [@marcosnils](https://twitter.com/marcosnils) & [@xetorthio](https://twitter.com/xetorthio)! 
.debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- ## We will (mostly) interact with node1 only - Unless instructed, **all commands must be run from the first VM, `node1`** - We will only check out/copy the code on `node1` - When we use the other nodes, we will mostly do it through the Docker API - We will log into other nodes only for initial setup and a few "out of band" operations <br/>(checking internal logs, debugging...) .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- ## Terminals Once in a while, the instructions will say: <br/>"Open a new terminal." There are multiple ways to do this: - create a new window or tab on your machine, and SSH into the VM; - use screen or tmux on the VM and open a new window from there. You are welcome to use the method that you feel the most comfortable with. .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- ## Tmux cheatsheet - Ctrl-b c → creates a new window - Ctrl-b n → go to next window - Ctrl-b p → go to previous window - Ctrl-b " → split window top/bottom - Ctrl-b % → split window left/right - Ctrl-b Alt-1 → rearrange panes in columns - Ctrl-b Alt-2 → rearrange panes in rows - Ctrl-b arrows → navigate to other panes - Ctrl-b d → detach session - tmux attach → reattach to session .debug[[swarm/prereqs.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/prereqs.md)] --- ## Brand new versions! - Engine 17.10 - Compose 1.16 - Machine 0.12 .exercise[ - Check all installed versions: ```bash docker version docker-compose -v docker-machine -v ``` ] .debug[[swarm/versions.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/versions.md)] --- ## Wait, what, 17.10 ?!? 
-- - Docker 1.13 = Docker 17.03 (year.month, like Ubuntu) - Every month, there is a new "edge" release (with new features) - Every quarter, there is a new "stable" release - Docker CE releases are maintained 4+ months - Docker EE releases are maintained 12+ months - For more details, check the [Docker EE announcement blog post](https://blog.docker.com/2017/03/docker-enterprise-edition/) .debug[[swarm/versions.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/versions.md)] --- class: extra-details ## Docker CE vs Docker EE - Docker EE: - $$$ - certification for select distros, clouds, and plugins - advanced management features (fine-grained access control, security scanning...) - Docker CE: - free - available through Docker Mac, Docker Windows, and major Linux distros - perfect for individuals and small organizations .debug[[swarm/versions.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/versions.md)] --- class: extra-details ## Why? - More readable for enterprise users (i.e. the very nice folks who are kind enough to pay us big $$$ for our stuff) - No impact for the community (beyond CE/EE suffix and version numbering change) - Both trains leverage the same open source components (containerd, libcontainer, SwarmKit...) - More predictable release schedule (see next slide) .debug[[swarm/versions.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/versions.md)] --- class: pic  .debug[[swarm/versions.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/versions.md)] --- ## What was added when? 

||||
| ---- | ----- | --- |
| 2015 | 1.9 | Overlay (multi-host) networking, network/IPAM plugins
| 2016 | 1.10 | Embedded dynamic DNS
| 2016 | 1.11 | DNS round robin load balancing
| 2016 | 1.12 | Swarm mode, routing mesh, encrypted networking, healthchecks
| 2017 | 1.13 | Stacks, attachable overlays, image squash and compress
| 2017 | 1.13 | Windows Server 2016 Swarm mode
| 2017 | 17.03 | Secrets
| 2017 | 17.04 | Update rollback, placement preferences (soft constraints)
| 2017 | 17.05 | Multi-stage image builds, service logs
| 2017 | 17.06 | Swarm configs, node/service events
| 2017 | 17.06 | Windows Server 2016 Swarm overlay networks, secrets

.debug[[swarm/versions.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/versions.md)] --- class: title All right! <br/> We're all set. <br/> Let's do this. .debug[(inline)] --- name: toc-our-sample-application class: title Our sample application .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Our sample application - Visit the GitHub repository with all the materials of this workshop: <br/>https://github.com/jpetazzo/orchestration-workshop - The application is in the [dockercoins]( https://github.com/jpetazzo/orchestration-workshop/tree/master/dockercoins) subdirectory - Let's look at the general layout of the source code: there is a Compose file [docker-compose.yml]( https://github.com/jpetazzo/orchestration-workshop/blob/master/dockercoins/docker-compose.yml) ... ... 
and 4 other services, each in its own directory: - `rng` = web service generating random bytes - `hasher` = web service computing hash of POSTed data - `worker` = background process using `rng` and `hasher` - `webui` = web interface to watch progress .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- class: extra-details ## Compose file format version *Particularly relevant if you have used Compose before...* - Compose 1.6 introduced support for a new Compose file format (aka "v2") - Services are no longer at the top level, but under a `services` section - There has to be a `version` key at the top level, with value `"2"` (as a string, not an integer) - Containers are placed on a dedicated network, making links unnecessary - There are other minor differences, but upgrade is easy and straightforward .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Links, naming, and service discovery - Containers can have network aliases (resolvable through DNS) - Compose file version 2+ makes each container reachable through its service name - Compose file version 1 did require "links" sections - Our code can connect to services using their short name (instead of e.g. IP address or FQDN) - Network aliases are automatically namespaced (i.e. you can have multiple apps declaring and using a service named `database`) .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Example in `worker/worker.py`  .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## What's this application? 
.debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- class: pic  (DockerCoins 2016 logo courtesy of [@XtlCnslt](https://twitter.com/xtlcnslt) and [@ndeloof](https://twitter.com/ndeloof). Thanks!) .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## What's this application? - It is a DockerCoin miner! 💰🐳📦🚢 -- - No, you can't buy coffee with DockerCoins -- - How DockerCoins works: - `worker` asks `rng` to generate a few random bytes - `worker` feeds these bytes into `hasher` - and repeat forever! - every second, `worker` updates `redis` to indicate how many loops were done - `webui` queries `redis`, and computes and exposes "hashing speed" in your browser .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Getting the application source code - We will clone the GitHub repository - The repository also contains scripts and tools that we will use throughout the workshop .exercise[ <!-- ```bash if [ -d orchestration-workshop ]; then mv orchestration-workshop orchestration-workshop.$$ fi ``` --> - Clone the repository on `node1`: ```bash git clone https://github.com/jpetazzo/orchestration-workshop ``` ] (You can also fork the repository on GitHub and clone your fork if you prefer that.) .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- name: toc-running-the-application class: title Running the application .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Running the application Without further ado, let's start our application. 
.exercise[ - Go to the `dockercoins` directory, in the cloned repo: ```bash cd ~/orchestration-workshop/dockercoins ``` - Use Compose to build and run all containers: ```bash docker-compose up ``` <!-- ```wait units of work done``` ```keys ^C``` --> ] Compose tells Docker to build all container images (pulling the corresponding base images), then starts all containers, and displays aggregated logs. .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Lots of logs - The application continuously generates logs - We can see the `worker` service making requests to `rng` and `hasher` - Let's put that in the background .exercise[ - Stop the application by hitting `^C` ] - `^C` stops all containers by sending them the `TERM` signal - Some containers exit immediately, others take longer <br/>(because they don't handle `SIGTERM` and end up being killed after a 10s timeout) .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Restarting in the background - Many flags and commands of Compose are modeled after those of `docker` .exercise[ - Start the app in the background with the `-d` option: ```bash docker-compose up -d ``` - Check that our app is running with the `ps` command: ```bash docker-compose ps ``` ] `docker-compose ps` also shows the ports exposed by the application. .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- class: extra-details ## Viewing logs - The `docker-compose logs` command works like `docker logs` .exercise[ - View all logs since container creation and exit when done: ```bash docker-compose logs ``` - Stream container logs, starting at the last 10 lines for each container: ```bash docker-compose logs --tail 10 --follow ``` <!-- ```wait units of work done``` ```keys ^C``` --> ] Tip: use `^S` and `^Q` to pause/resume log output. 
.debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- class: extra-details ## Upgrading from Compose 1.6 .warning[The `logs` command has changed between Compose 1.6 and 1.7!] - Up to 1.6 - `docker-compose logs` is the equivalent of `logs --follow` - `docker-compose logs` must be restarted if containers are added - Since 1.7 - `--follow` must be specified explicitly - new containers are automatically picked up by `docker-compose logs` .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Connecting to the web UI - The `webui` container exposes a web dashboard; let's view it .exercise[ - With a web browser, connect to `node1` on port 8000 - Remember: the `nodeX` aliases are valid only on the nodes themselves - In your browser, you need to enter the IP address of your node <!-- ```open http://node1:8000``` --> ] You should see a speed of approximately 4 hashes/second. More precisely: 4 hashes/second, with regular dips down to zero. <br/>This is because Jérôme is incapable of writing good frontend code. <br/>Don't ask. Seriously, don't ask. This is embarrassing. .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- class: extra-details ## Why does the speed seem irregular? - The app actually has a constant, steady speed: 3.33 hashes/second <br/> (which corresponds to 1 hash every 0.3 seconds, for *reasons*) - The worker doesn't update the counter after every loop, but up to once per second - The speed is computed by the browser, checking the counter about once per second - Between two consecutive updates, the counter will increase either by 4, or by 0 - The perceived speed will therefore be 4 - 4 - 4 - 0 - 4 - 4 - etc. 
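
The pattern described above can be reproduced with a bit of integer arithmetic. The sketch below rests on assumptions that are not stated in the slides: the worker adds 4 hashes to the counter every 1.2 seconds (4 loops of 0.3 seconds each), and the browser reads the counter exactly once per second. The name `simulate_speed` is hypothetical.

```bash
# Simulate the hash counter as the browser sees it, in tenths of a second.
# Assumptions (illustrative only): the worker adds 4 hashes to the counter
# every 1.2s (4 loops x 0.3s), and the browser reads it once per second.
simulate_speed() {
  seconds=$1
  previous=0
  deltas=""
  t=10
  while [ "$t" -le $(( seconds * 10 )) ]; do
    counter=$(( 4 * (t / 12) ))            # total hashes flushed by time t
    deltas="$deltas $(( counter - previous ))"
    previous=$counter
    t=$(( t + 10 ))
  done
  echo "${deltas# }"                        # drop the leading space
}

simulate_speed 12
```

Under these assumptions, this prints `0 4 4 4 4 4 0 4 4 4 4 4`: 40 hashes over 12 seconds, or about 3.33 per second on average, with a periodic dip to zero.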
*We told you to not ask!!!* .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Scaling up the application - Our goal is to make that performance graph go up (without changing a line of code!) -- - Before trying to scale the application, we'll figure out if we need more resources (CPU, RAM...) - For that, we will use good old UNIX tools on our Docker node .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Looking at resource usage - Let's look at CPU, memory, and I/O usage .exercise[ - run `top` to see CPU and memory usage (you should see idle cycles) <!-- ```bash top``` ```wait Tasks``` ```keys ^C``` --> - run `vmstat 1` to see I/O usage (si/so/bi/bo) <br/>(the 4 numbers should be almost zero, except `bo` for logging) <!-- ```bash vmstat 1``` ```wait memory``` ```keys ^C``` --> ] We have available resources. - Why? - How can we use them? .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Scaling workers on a single node - Docker Compose supports scaling - Let's scale `worker` and see what happens! .exercise[ - Start one more `worker` container: ```bash docker-compose scale worker=2 ``` - Look at the performance graph (it should show a x2 improvement) - Look at the aggregated logs of our containers (`worker_2` should show up) - Look at the impact on CPU load with e.g. top (it should be negligible) ] .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Adding more workers - Great, let's add more workers and call it a day, then! .exercise[ - Start eight more `worker` containers: ```bash docker-compose scale worker=10 ``` - Look at the performance graph: does it show a x10 improvement? 
- Look at the aggregated logs of our containers - Look at the impact on CPU load and memory usage ] .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- name: toc-identifying-bottlenecks class: title Identifying bottlenecks .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Identifying bottlenecks - You should have seen a 3x speed bump (not 10x) - Adding workers didn't result in linear improvement - *Something else* is slowing us down -- - ... But what? -- - The code doesn't have instrumentation - Let's use state-of-the-art HTTP performance analysis! <br/>(i.e. good old tools like `ab`, `httping`...) .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Accessing internal services - `rng` and `hasher` are exposed on ports 8001 and 8002 - This is declared in the Compose file: ```yaml ... rng: build: rng ports: - "8001:80" hasher: build: hasher ports: - "8002:80" ... ``` .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Measuring latency under load We will use `httping`. .exercise[ - Check the latency of `rng`: ```bash httping -c 10 localhost:8001 ``` - Check the latency of `hasher`: ```bash httping -c 10 localhost:8002 ``` ] `rng` has a much higher latency than `hasher`. .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Let's draw hasty conclusions - The bottleneck seems to be `rng` - *What if* we don't have enough entropy and can't generate enough random numbers? - We need to scale out the `rng` service on multiple machines! Note: this is a fiction! We have enough entropy. But we need a pretext to scale out. (In fact, the code of `rng` uses `/dev/urandom`, which never runs out of entropy... 
<br/> ...and is [just as good as `/dev/random`](http://www.slideshare.net/PacSecJP/filippo-plain-simple-reality-of-entropy).) .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- ## Clean up - Before moving on, let's remove those containers .exercise[ - Tell Compose to remove everything: ```bash docker-compose down ``` ] .debug[[common/sampleapp.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/common/sampleapp.md)] --- name: toc-swarmkit class: title SwarmKit .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # SwarmKit - [SwarmKit](https://github.com/docker/swarmkit) is an open source toolkit to build multi-node systems - It is a reusable library, like libcontainer, libnetwork, vpnkit ... - It is a plumbing part of the Docker ecosystem -- .footnote[🐳 Did you know that кит means "whale" in Russian?] .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- ## SwarmKit features - Highly-available, distributed store based on [Raft]( https://en.wikipedia.org/wiki/Raft_%28computer_science%29) <br/>(avoids depending on an external store: easier to deploy; higher performance) - Dynamic reconfiguration of Raft without interrupting cluster operations - *Services* managed with a *declarative API* <br/>(implementing *desired state* and *reconciliation loop*) - Integration with overlay networks and load balancing - Strong emphasis on security: - automatic TLS keying and signing; automatic cert rotation - full encryption of the data plane; automatic key rotation - least privilege architecture (single-node compromise ≠ cluster compromise) - on-disk encryption with optional passphrase .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- class: extra-details ## Where is the key/value store? 
- Many orchestration systems use a key/value store backed by a consensus algorithm <br/> (k8s→etcd→Raft, mesos→zookeeper→ZAB, etc.) - SwarmKit implements the Raft algorithm directly <br/> (Nomad is similar; thanks [@cbednarski](https://twitter.com/@cbednarski), [@diptanu](https://twitter.com/diptanu) and others for pointing it out!) - Analogy courtesy of [@aluzzardi](https://twitter.com/aluzzardi): *It's like B-Trees and RDBMS. They are different layers, often associated. But you don't need to bring up a full SQL server when all you need is to index some data.* - As a result, the orchestrator has direct access to the data <br/> (the main copy of the data is stored in the orchestrator's memory) - Simpler, easier to deploy and operate; also faster .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- ## SwarmKit concepts (1/2) - A *cluster* will be at least one *node* (preferably more) - A *node* can be a *manager* or a *worker* - A *manager* actively takes part in the Raft consensus, and keeps the Raft log - You can talk to a *manager* using the SwarmKit API - One *manager* is elected as the *leader*; other managers merely forward requests to it - The *workers* get their instructions from the *managers* - Both *workers* and *managers* can run containers .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- ## Illustration  .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- ## SwarmKit concepts (2/2) - The *managers* expose the SwarmKit API - Using the API, you can indicate that you want to run a *service* - A *service* is specified by its *desired state*: which image, how many instances... 
- The *leader* uses different subsystems to break down services into *tasks*: <br/>orchestrator, scheduler, allocator, dispatcher - A *task* corresponds to a specific container, assigned to a specific *node* - *Nodes* know which *tasks* should be running, and will start or stop containers accordingly (through the Docker Engine API) You can refer to the [NOMENCLATURE](https://github.com/docker/swarmkit/blob/master/design/nomenclature.md) in the SwarmKit repo for more details. .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- ## Swarm Mode - Since version 1.12, Docker Engine embeds SwarmKit - All the SwarmKit features are "asleep" until you enable "Swarm Mode" - Examples of Swarm Mode commands: - `docker swarm` (enable Swarm mode; join a Swarm; adjust cluster parameters) - `docker node` (view nodes; promote/demote managers; manage nodes) - `docker service` (create and manage services) ??? - The Docker API exposes the same concepts - The SwarmKit API is also exposed (on a separate socket) .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- ## You need to enable Swarm mode to use the new stuff - By default, all this new code is inactive - Swarm Mode can be enabled, "unlocking" SwarmKit functions <br/>(services, out-of-the-box overlay networks, etc.) .exercise[ - Try a Swarm-specific command: ```bash docker node ls ``` <!-- Ignore errors: ```wait ``` --> ] -- You will get an error message: ``` Error response from daemon: This node is not a swarm manager. [...] 
``` .debug[[swarm/swarmkit.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/swarmkit.md)] --- name: toc-creating-our-first-swarm class: title Creating our first Swarm .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Creating our first Swarm - The cluster is initialized with `docker swarm init` - This should be executed on a first, seed node - .warning[DO NOT execute `docker swarm init` on multiple nodes!] You would have multiple disjoint clusters. .exercise[ - Create our cluster from node1: ```bash docker swarm init ``` ] -- class: advertise-addr If Docker tells you that it `could not choose an IP address to advertise`, see next slide! .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: advertise-addr ## IP address to advertise - When running in Swarm mode, each node *advertises* its address to the others <br/> (i.e. it tells them *"you can contact me on 10.1.2.3:2377"*) - If the node has only one IP address (other than 127.0.0.1), it is used automatically - If the node has multiple IP addresses, you **must** specify which one to use <br/> (Docker refuses to pick one randomly) - You can specify an IP address or an interface name <br/>(in the latter case, Docker will read the IP address of the interface and use it) - You can also specify a port number <br/>(otherwise, the default port 2377 will be used) .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: advertise-addr ## Which IP address should be advertised? - If your nodes have only one IP address, it's safe to let autodetection do the job .small[(Except if your instances have different private and public addresses, e.g. 
on EC2, and you are building a Swarm involving nodes inside and outside the private network: then you should advertise the public address.)] - If your nodes have multiple IP addresses, pick an address which is reachable *by every other node* of the Swarm - If you are using [play-with-docker](http://play-with-docker.com/), use the IP address shown next to the node name .small[(This is the address of your node on your private internal overlay network. The other address that you might see is the address of your node on the `docker_gwbridge` network, which is used for outbound traffic.)] Examples: ```bash docker swarm init --advertise-addr 10.0.9.2 docker swarm init --advertise-addr eth0:7777 ``` .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: extra-details ## Using a separate interface for the data path - You can use different interfaces (or IP addresses) for control and data - You set the _control plane path_ with `--advertise-addr` (This will be used for SwarmKit manager/worker communication, leader election, etc.) - You set the _data plane path_ with `--data-path-addr` (This will be used for traffic between containers) - Both flags can accept either an IP address, or an interface name (When specifying an interface name, Docker will use its first IP address) .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- ## Token generation - In the output of `docker swarm init`, we have a message confirming that our node is now the (single) manager: ``` Swarm initialized: current node (8jud...) is now a manager. 
``` - Docker generated two security tokens (like passphrases or passwords) for our cluster - The CLI shows us the command to use on other nodes to add them to the cluster using the "worker" security token: ``` To add a worker to this swarm, run the following command: docker swarm join \ --token SWMTKN-1-59fl4ak4nqjmao1ofttrc4eprhrola2l87... \ 172.31.4.182:2377 ``` .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: extra-details ## Checking that Swarm mode is enabled .exercise[ - Run the traditional `docker info` command: ```bash docker info ``` ] The output should include: ``` Swarm: active NodeID: 8jud7o8dax3zxbags3f8yox4b Is Manager: true ClusterID: 2vcw2oa9rjps3a24m91xhvv0c ... ``` .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- ## Running our first Swarm mode command - Let's retry the exact same command as earlier .exercise[ - List the nodes (well, the only node) of our cluster: ```bash docker node ls ``` ] The output should look like the following: ``` ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS 8jud...ox4b * node1 Ready Active Leader ``` .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- ## Adding nodes to the Swarm - A cluster with one node is not a lot of fun - Let's add `node2`! - We need the token that was shown earlier -- - You wrote it down, right? 
-- - Don't panic, we can easily see it again 😏 .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- ## Adding nodes to the Swarm .exercise[ - Show the token again: ```bash docker swarm join-token worker ``` - Log into `node2`: ```bash ssh node2 ``` - Copy-paste the `docker swarm join ...` command <br/>(that was displayed just before) <!-- ```copypaste docker swarm join --token SWMTKN.*?:2377``` --> ] .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: extra-details ## Check that the node was added correctly - Stay on `node2` for now! .exercise[ - We can still use `docker info` to verify that the node is part of the Swarm: ```bash docker info | grep ^Swarm ``` ] - However, Swarm commands will not work; try, for instance: ```bash docker node ls ``` ```wait``` - This is because the node that we added is currently a *worker* - Only *managers* can accept Swarm-specific commands .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- ## View our two-node cluster - Let's go back to `node1` and see what our cluster looks like .exercise[ - Switch back to `node1`: ```keys ^D ``` - View the cluster from `node1`, which is a manager: ```bash docker node ls ``` ] The output should be similar to the following: ``` ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS 8jud...ox4b * node1 Ready Active Leader ehb0...4fvx node2 Ready Active ``` .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: under-the-hood ## Under the hood: docker swarm init When we do `docker swarm init`: - a keypair is created for the root CA of our Swarm - a keypair is created for the first node - a certificate is issued for this node - the join tokens are created 
.debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: under-the-hood ## Under the hood: join tokens There is one token to *join as a worker*, and another to *join as a manager*. The join tokens have two parts: - a secret key (preventing unauthorized nodes from joining) - a fingerprint of the root CA certificate (preventing MITM attacks) If a token is compromised, it can be rotated instantly with: ``` docker swarm join-token --rotate <worker|manager> ``` .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: under-the-hood ## Under the hood: docker swarm join When a node joins the Swarm: - it is issued its own keypair, signed by the root CA - if the node is a manager: - it joins the Raft consensus - it connects to the current leader - it accepts connections from worker nodes - if the node is a worker: - it connects to one of the managers (leader or follower) .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: under-the-hood ## Under the hood: cluster communication - The *control plane* is encrypted with AES-GCM; keys are rotated every 12 hours - Authentication is done with mutual TLS; certificates are rotated every 90 days (`docker swarm update` lets you change this delay or use an external CA) - The *data plane* (communication between containers) is not encrypted by default (but this can be activated on a per-network basis, using IPsec, leveraging hardware crypto if available) .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- class: under-the-hood ## Under the hood: I want to know more! 
Revisit SwarmKit concepts: - Docker 1.12 Swarm Mode Deep Dive Part 1: Topology ([video](https://www.youtube.com/watch?v=dooPhkXT9yI)) - Docker 1.12 Swarm Mode Deep Dive Part 2: Orchestration ([video](https://www.youtube.com/watch?v=_F6PSP-qhdA)) Some presentations from the Docker Distributed Systems Summit in Berlin: - Heart of the SwarmKit: Topology Management ([slides](https://speakerdeck.com/aluzzardi/heart-of-the-swarmkit-topology-management)) - Heart of the SwarmKit: Store, Topology & Object Model ([slides](http://www.slideshare.net/Docker/heart-of-the-swarmkit-store-topology-object-model)) ([video](https://www.youtube.com/watch?v=EmePhjGnCXY)) And DockerCon Black Belt talks: .blackbelt[DC17US: Everything You Thought You Already Knew About Orchestration ([video](https://www.youtube.com/watch?v=Qsv-q8WbIZY&list=PLkA60AVN3hh-biQ6SCtBJ-WVTyBmmYho8&index=6))] .blackbelt[DC17EU: Container Orchestration from Theory to Practice ([video](https://dockercon.docker.com/watch/5fhwnQxW8on1TKxPwwXZ5r))] .debug[[swarm/creatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/creatingswarm.md)] --- ## Adding more manager nodes - Right now, we have only one manager (node1) - If we lose it, we lose quorum - and that's *very bad!* - Containers running on other nodes will be fine ... - But we won't be able to get or set anything related to the cluster - If the manager is permanently gone, we will have to do a manual repair! - Nobody wants to do that ... 
so let's make our cluster highly available .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- class: self-paced ## Adding more managers With Play-With-Docker: ```bash TOKEN=$(docker swarm join-token -q manager) for N in $(seq 4 5); do export DOCKER_HOST=tcp://node$N:2375 docker swarm join --token $TOKEN node1:2377 done unset DOCKER_HOST ``` .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- class: in-person ## Building our full cluster - We could SSH to nodes 3, 4, 5; and copy-paste the command -- class: in-person - Or we could use the AWESOME POWER OF THE SHELL! -- class: in-person  -- class: in-person - No, not *that* shell .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- class: in-person ## Let's form like Swarm-tron - Let's get the token, and loop over the remaining nodes with SSH .exercise[ - Obtain the manager token: ```bash TOKEN=$(docker swarm join-token -q manager) ``` - Loop over the 3 remaining nodes: ```bash for NODE in node3 node4 node5; do ssh $NODE docker swarm join --token $TOKEN node1:2377 done ``` ] [That was easy.](https://www.youtube.com/watch?v=3YmMNpbFjp0) .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## You can control the Swarm from any manager node .exercise[ - Try the following command on a few different nodes: ```bash docker node ls ``` ] On manager nodes: <br/>you will see the list of nodes, with a `*` denoting the node you're talking to. On non-manager nodes: <br/>you will get an error message telling you that the node is not a manager. As we saw earlier, you can only control the Swarm through a manager node. 
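
A script that needs to run Swarm commands can first check whether the local node is a manager. The following is a minimal sketch, assuming the `Swarm.ControlAvailable` field reported by `docker info`; the helper name `is_manager` is ours, not a Docker command.

```bash
# Hypothetical helper: succeeds (exit status 0) when the local Engine
# is a Swarm manager, fails otherwise (worker, or Swarm mode inactive).
is_manager() {
  [ "$(docker info --format '{{.Swarm.ControlAvailable}}' 2>/dev/null)" = "true" ]
}
```

It could be used as `is_manager && docker node ls`, so that the Swarm command only runs where it can succeed.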
.debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- class: self-paced ## Play-With-Docker node status icon - If you're using Play-With-Docker, you get node status icons - Node status icons are displayed left of the node name - No icon = no Swarm mode detected - Solid blue icon = Swarm manager detected - Blue outline icon = Swarm worker detected  .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## Dynamically changing the role of a node - We can change the role of a node on the fly: `docker node promote nodeX` → make nodeX a manager <br/> `docker node demote nodeX` → make nodeX a worker .exercise[ - See the current list of nodes: ``` docker node ls ``` - Promote any worker node to be a manager: ``` docker node promote <node_name_or_id> ``` ] .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## How many managers do we need? - 2N+1 nodes can (and will) tolerate N failures <br/>(you can have an even number of managers, but there is no point) -- - 1 manager = no failure - 3 managers = 1 failure - 5 managers = 2 failures (or 1 failure during 1 maintenance) - 7 managers and more = now you might be overdoing it a little bit .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## Why not have *all* nodes be managers? - Intuitively, it's harder to reach consensus in larger groups - With Raft, writes have to go to all nodes (and be acknowledged by a majority of them) - More nodes = more network traffic - Bigger network = more latency .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## What would MacGyver do? 
- If some of your machines are more than 10ms away from each other, <br/> try to break them down into multiple clusters (keeping internal latency low) - Groups of up to 9 nodes: all of them are managers - Groups of 10 nodes and up: pick 5 "stable" nodes to be managers <br/> (Cloud pro-tip: use separate auto-scaling groups for managers and workers) - Groups of more than 100 nodes: watch your managers' CPU and RAM - Groups of more than 1000 nodes: - if you can afford to have fast, stable managers, add more of them - otherwise, break down your nodes into multiple clusters .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## What's the upper limit? - We don't know! - Internal testing at Docker Inc.: 1000-10000 nodes is fine - deployed to a single cloud region - one of the main take-aways was *"you're gonna need a bigger manager"* - Testing by the community: [4700 heterogeneous nodes all over the 'net](https://sematext.com/blog/2016/11/14/docker-swarm-lessons-from-swarm3k/) - it just works - more nodes require more CPU; more containers require more RAM - scheduling of large jobs (70000 containers) is slow, though (working on it!) .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- ## Real-life deployment methods -- Running commands manually over SSH -- (lol jk) -- - Using your favorite configuration management tool - [Docker for AWS](https://docs.docker.com/docker-for-aws/#quickstart) - [Docker for Azure](https://docs.docker.com/docker-for-azure/) .debug[[swarm/morenodes.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/morenodes.md)] --- name: toc-running-our-first-swarm-service class: title Running our first Swarm service .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Running our first Swarm service - How do we run services? 
Simplified version: `docker run` → `docker service create` .exercise[ - Create a service featuring an Alpine container pinging Google resolvers: ```bash docker service create alpine ping 8.8.8.8 ``` - Check the result: ```bash docker service ps <serviceID> ``` ] .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## `--detach` for service creation (New in Docker Engine 17.05) If you are running Docker 17.05 to 17.09, you will see the following message: ``` Since --detach=false was not specified, tasks will be created in the background. In a future release, --detach=false will become the default. ``` You can ignore that for now; but we'll come back to it in just a few minutes! .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Checking service logs (New in Docker Engine 17.05) - Just like `docker logs` shows the output of a specific local container ... - ... `docker service logs` shows the output of all the containers of a specific service .exercise[ - Check the output of our ping command: ```bash docker service logs <serviceID> ``` ] Flags `--follow` and `--tail` are available, as well as a few others. Note: by default, when a container is destroyed (e.g. when scaling down), its logs are lost. 
.debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: extra-details ## Before Docker Engine 17.05 - Docker 1.13/17.03/17.04 have `docker service logs` as an experimental feature <br/>(available only when enabling the experimental feature flag) - We have to use `docker logs`, which only works on local containers - We will have to connect to the node running our container <br/>(unless it was scheduled locally, of course) .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: extra-details ## Looking up where our container is running - The `docker service ps` command told us where our container was scheduled .exercise[ - Look up the `NODE` on which the container is running: ```bash docker service ps <serviceID> ``` - If you use Play-With-Docker, switch to that node's tab, or set `DOCKER_HOST` - Otherwise, `ssh` into that node or use `eval $(docker-machine env node...)` ] .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: extra-details ## Viewing the logs of the container .exercise[ - See that the container is running and check its ID: ```bash docker ps ``` - View its logs: ```bash docker logs <containerID> ``` - Go back to `node1` afterwards ] .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Scale our service - Services can be scaled in a pinch with the `docker service update` command .exercise[ - Scale the service to ensure 2 copies per node: ```bash docker service update <serviceID> --replicas 10 --detach=true ``` - Check that we have two containers on the current node: ```bash docker ps ``` ] .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## View deployment progress (New 
in Docker Engine 17.05) - Commands that create/update/delete services can run with `--detach=false` - The CLI will show the status of the command, and exit once it's done working .exercise[ - Scale the service to ensure 3 copies per node: ```bash docker service update <serviceID> --replicas 15 --detach=false ``` ] Note: with Docker Engine 17.10 and later, `--detach=false` is the default. With versions older than 17.05, you can use e.g.: `watch docker service ps <serviceID>` .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Expose a service - Services can be exposed, with two special properties: - the public port is available on *every node of the Swarm*, - requests coming on the public port are load balanced across all instances. - This is achieved with option `-p/--publish`; as an approximation: `docker run -p → docker service create -p` - If you indicate a single port number, it will be mapped on a port starting at 30000 <br/>(vs. 32768 for single container mapping) - You can indicate two port numbers to set the public port number <br/>(just like with `docker run -p`) .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Expose ElasticSearch on its default port .exercise[ - Create an ElasticSearch service (and give it a name while we're at it): ```bash docker service create --name search --publish 9200:9200 --replicas 7 \ --detach=false elasticsearch`:2` ``` ] Note: don't forget the **:2**! The latest version of the ElasticSearch image won't start without mandatory configuration. 
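
To confirm which public port a service ended up with (pinned, as with `9200:9200` here, or picked automatically from 30000 upward), the service definition can be inspected. This is a rough sketch, assuming the `Endpoint.Ports` fields shown by `docker service inspect`; the helper name `published_ports` is hypothetical.

```bash
# Hypothetical helper: print "published->target/protocol" for each
# port published by the service whose name or ID is given as $1.
published_ports() {
  docker service inspect \
    --format '{{range .Endpoint.Ports}}{{.PublishedPort}}->{{.TargetPort}}/{{.Protocol}} {{end}}' \
    "$1"
}
```

For the service created above, it would be called as `published_ports search`.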
.debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Tasks lifecycle - During the deployment, you will be able to see multiple states: - assigned (the task has been assigned to a specific node) - preparing (this mostly means "pulling the image") - starting - running - When a task is terminated (stopped, killed...) it cannot be restarted (A replacement task will be created) .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: extra-details  .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Test our service - We mapped port 9200 on the nodes, to port 9200 in the containers - Let's try to reach that port! .exercise[ - Try the following command: ```bash curl localhost:9200 ``` ] (If you get `Connection refused`: congratulations, you are very fast indeed! Just try again.) ElasticSearch serves a little JSON document with some basic information about this instance; including a randomly-generated super-hero name. .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Test the load balancing - If we repeat our `curl` command multiple times, we will see different names .exercise[ - Send 10 requests, and see which instances serve them: ```bash for N in $(seq 1 10); do curl -s localhost:9200 | jq .name done ``` ] Note: if you don't have `jq` on your Play-With-Docker instance, just install it: ``` apk add --no-cache jq ``` .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Load balancing results Traffic is handled by our cluster's [TCP routing mesh]( https://docs.docker.com/engine/swarm/ingress/). Each request is served by one of the 7 instances, in rotation. 
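
To see the rotation as numbers instead of reading names off the screen, the answers can be tallied per instance. This is a small sketch built on the same `curl` and `jq` commands as the exercise; the function names `count_by_instance` and `tally_requests` are ours.

```bash
# Read one instance name per line on stdin;
# print "count name" lines, busiest instance first.
count_by_instance() {
  sort | uniq -c | sort -rn
}

# Send $1 requests to the published port and tally which instance answered.
# Assumes the service is published on localhost:9200 and that jq is installed.
tally_requests() {
  for N in $(seq 1 "$1"); do
    curl -s localhost:9200 | jq -r .name
  done | count_by_instance
}
```

Running `tally_requests 70` against the 7 replicas should show every instance name, with counts that are close to each other if requests are spread evenly.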
Note: if you try to access the service from your browser, you will probably see the same instance name over and over, because your browser (unlike curl) will try to re-use the same connection. .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Under the hood of the TCP routing mesh - Load balancing is done by IPVS - IPVS is a high-performance, in-kernel load balancer - It's been around for a long time (merged in the kernel since 2.4) - Each node runs a local load balancer (Allowing connections to be routed directly to the destination, without extra hops) .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Managing inbound traffic There are many ways to deal with inbound traffic on a Swarm cluster. - Put all (or a subset) of your nodes in a DNS `A` record - Assign your nodes (or a subset) to an ELB - Use a virtual IP and make sure that it is assigned to an "alive" node - etc. 
.debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: btw-labels ## Managing HTTP traffic - The TCP routing mesh doesn't parse HTTP headers - If you want to place multiple HTTP services on port 80, you need something more - You can set up NGINX or HAProxy on port 80 to do the virtual host switching - Docker Universal Control Plane provides its own [HTTP routing mesh]( https://docs.docker.com/datacenter/ucp/2.1/guides/admin/configure/use-domain-names-to-access-services/) - add a specific label starting with `com.docker.ucp.mesh.http` to your services - labels are detected automatically and dynamically update the configuration .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: btw-labels ## You should use labels - Labels are a great way to attach arbitrary information to services - Examples: - HTTP vhost of a web app or web service - backup schedule for a stateful service - owner of a service (for billing, paging...) - etc. .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Pro-tip for ingress traffic management - It is possible to use *local* networks with Swarm services - This means that you can do something like this: ```bash docker service create --network host --mode global traefik ... ``` (This runs the `traefik` load balancer on each node of your cluster, in the `host` network) - This gives you native performance (no iptables, no proxy, no nothing!) 
- The load balancer will "see" the clients' IP addresses - But: a container cannot simultaneously be in the `host` network and another network (You will have to route traffic to containers using exposed ports or UNIX sockets) .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- class: extra-details ## Using local networks (`host`, `macvlan` ...) with Swarm services - Using the `host` network is fairly straightforward (With the caveats described on the previous slide) - It is also possible to use drivers like `macvlan` - see [this guide]( https://docs.docker.com/engine/userguide/networking/get-started-macvlan/ ) to get started on `macvlan` - see [this PR](https://github.com/moby/moby/pull/32981) for more information about local network drivers in Swarm mode .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Visualize container placement - Let's leverage the Docker API! 
.exercise[ - Get the source code of this simple-yet-beautiful visualization app: ```bash cd ~ git clone https://github.com/dockersamples/docker-swarm-visualizer ``` - Build and run the Swarm visualizer: ```bash cd docker-swarm-visualizer docker-compose up -d ``` ] .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Connect to the visualization webapp - It runs a web server on port 8080 .exercise[ - Point your browser to port 8080 of your node1's public IP (If you use Play-With-Docker, click on the (8080) badge) <!-- ```open http://node1:8080``` --> ] - The webapp updates the display automatically (you don't need to reload the page) - It only shows Swarm services (not standalone containers) - It shows when nodes go down - It has some glitches (it's not Carrier-Grade Enterprise-Compliant ISO-9001 software) .debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Why This Is More Important Than You Think - The visualizer accesses the Docker API *from within a container* - This is a common pattern: run container management tools *in containers* - Instead of viewing your cluster, this could take care of logging, metrics, autoscaling ... - We can run it within a service, too! We won't do it, but the command would look like: ```bash docker service create \ --mount source=/var/run/docker.sock,type=bind,target=/var/run/docker.sock \ --name viz --constraint node.role==manager ... ``` Credits: the visualization code was written by [Francisco Miranda](https://github.com/maroshii). <br/> [Mano Marks](https://twitter.com/manomarks) adapted it to Swarm and maintains it. 
.debug[[swarm/firstservice.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/firstservice.md)] --- ## Terminate our services - Before moving on, we will remove those services - `docker service rm` can accept multiple service names or IDs - `docker service ls` can accept the `-q` flag - A Shell snippet a day keeps the cruft away .exercise[ - Remove all services with this one-liner: ```bash docker service ls -q | xargs docker service rm ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: title Our app on Swarm .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## What's on the menu? In this part, we will: - **build** images for our app, - **ship** these images with a registry, - **run** services using these images. .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Why do we need to ship our images? - When we do `docker-compose up`, images are built for our services - These images are present only on the local node - We need these images to be distributed on the whole Swarm - The easiest way to achieve that is to use a Docker registry - Once our images are on a registry, we can reference them when creating our services .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: extra-details ## Build, ship, and run, for a single service If we had only one service (built from a `Dockerfile` in the current directory), our workflow could look like this: ``` docker build -t jpetazzo/doublerainbow:v0.1 . docker push jpetazzo/doublerainbow:v0.1 docker service create jpetazzo/doublerainbow:v0.1 ``` We just have to adapt this to our application, which has 4 services! 
.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## The plan - Build on our local node (`node1`) - Tag images so that they are named `localhost:5000/servicename` - Upload them to a registry - Create services using the images .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Which registry do we want to use? .small[ - **Docker Hub** - hosted by Docker Inc. - requires an account (free, no credit card needed) - images will be public (unless you pay) - located in AWS EC2 us-east-1 - **Docker Trusted Registry** - self-hosted commercial product - requires a subscription (free 30-day trial available) - images can be public or private - located wherever you want - **Docker open source registry** - self-hosted barebones repository hosting - doesn't require anything - doesn't come with anything either - located wherever you want ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: extra-details ## Using Docker Hub *If we wanted to use the Docker Hub...* <!-- ```meta ^{ ``` --> - We would log into the Docker Hub: ```bash docker login ``` - And in the following slides, we would use our Docker Hub login (e.g. `jpetazzo`) instead of the registry address (i.e. 
`127.0.0.1:5000`) <!-- ```meta ^} ``` --> .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: extra-details ## Using Docker Trusted Registry *If we wanted to use DTR, we would...* - Make sure we have a Docker Hub account - [Activate a Docker Datacenter subscription]( https://hub.docker.com/enterprise/trial/) - Install DTR on our machines - Use `dtraddress:port/user` instead of the registry address *This is out of the scope of this workshop!* .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Using the open source registry - We need to run a `registry:2` container <br/>(make sure you specify tag `:2` to run the new version!) - It will store images and layers to the local filesystem <br/>(but you can add a config file to use S3, Swift, etc.) - Docker *requires* TLS when communicating with the registry - except for registries on `127.0.0.0/8` (i.e. `localhost`) - or with the Engine flag `--insecure-registry` <!-- --> - Our strategy: publish the registry container on port 5000, <br/>so that it's available through `127.0.0.1:5000` on each node .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- name: toc-deploying-a-local-registry class: title Deploying a local registry .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- class: manual-btp # Deploying a local registry - We will create a single-instance service, publishing its port on the whole cluster .exercise[ - Create the registry service: ```bash docker service create --name registry --publish 5000:5000 registry:2 ``` - Now try the following command; it should return `{"repositories":[]}`: ```bash curl 127.0.0.1:5000/v2/_catalog ``` ] (If that doesn't work, wait a few seconds and try again.) 
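
The "wait a few seconds and try again" step can be automated with a small retry loop. This is a minimal sketch; the function name `wait_for` and its arguments (number of attempts, delay in seconds, then the command to run) are our own convention, not part of Docker or curl.

```bash
# Hypothetical helper: run a command until it succeeds.
#   $1 = maximum number of attempts
#   $2 = pause between attempts, in seconds
#   remaining arguments = the command to run
# Returns 0 as soon as the command succeeds, 1 if every attempt failed.
wait_for() {
  attempts=$1; delay=$2; shift 2
  while [ "$attempts" -gt 0 ]; do
    "$@" && return 0
    attempts=$((attempts - 1))
    [ "$attempts" -gt 0 ] && sleep "$delay"
  done
  return 1
}
```

For the registry, this could be called as `wait_for 10 1 curl -sf 127.0.0.1:5000/v2/_catalog`, which retries once per second for up to ten attempts.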
.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Testing our local registry - We can retag a small image, and push it to the registry .exercise[ - Make sure we have the busybox image, and retag it: ```bash docker pull busybox docker tag busybox 127.0.0.1:5000/busybox ``` - Push it: ```bash docker push 127.0.0.1:5000/busybox ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Checking what's on our local registry - The registry API has endpoints to query what's there .exercise[ - Ensure that our busybox image is now in the local registry: ```bash curl http://127.0.0.1:5000/v2/_catalog ``` ] The curl command should now output: ```json {"repositories":["busybox"]} ``` .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Build, tag, and push our application container images - Compose has named our images `dockercoins_XXX` for each service - We need to retag them (to `127.0.0.1:5000/XXX:v1`) and push them .exercise[ - Set `REGISTRY` and `TAG` environment variables to use our local registry - And run this little for loop: ```bash cd ~/orchestration-workshop/dockercoins REGISTRY=127.0.0.1:5000 TAG=v1 for SERVICE in hasher rng webui worker; do docker tag dockercoins_$SERVICE $REGISTRY/$SERVICE:$TAG docker push $REGISTRY/$SERVICE:$TAG done ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- name: toc-overlay-networks class: title Overlay networks .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- class: manual-btp # Overlay networks - SwarmKit integrates with overlay networks - Networks are created with `docker network create` - Make sure to specify 
that you want an *overlay* network <br/>(otherwise you will get a local *bridge* network by default) .exercise[ - Create an overlay network for our application: ```bash docker network create --driver overlay dockercoins ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Viewing existing networks - Let's confirm that our network was created .exercise[ - List existing networks: ```bash docker network ls ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Can you spot the differences? The networks `dockercoins` and `ingress` are different from the other ones. Can you see how? -- class: manual-btp - They are using a different kind of ID, reflecting the fact that they are SwarmKit objects instead of "classic" Docker Engine objects. - Their *scope* is `swarm` instead of `local`. - They are using the overlay driver. .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp, extra-details ## Caveats .warning[In Docker 1.12, you cannot join an overlay network with `docker run --net ...`.] Starting with version 1.13, you can, if the network was created with the `--attachable` flag. *Why is that?* Placing a container on a network requires allocating an IP address for this container. The allocation must be done by a manager node (worker nodes cannot update Raft data). As a result, `docker run --net ...` requires collaboration with manager nodes. It alters the code path for `docker run`, so it is allowed only under strict circumstances. 
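
To illustrate the `--attachable` case described above, here is a minimal sketch. The `attachable_demo` function and the `testnet` network name are hypothetical; the `DOCKER` variable is an assumption added so that the commands can be previewed with `DOCKER=echo` instead of being executed.

```bash
# Command used to talk to the Engine; set DOCKER=echo for a dry run.
DOCKER="${DOCKER:-docker}"

# Create an attachable overlay network (Docker 1.13 and later), then start
# a one-off "classic" container on it and show its network interfaces.
attachable_demo() {
  local net="$1"
  $DOCKER network create --driver overlay --attachable "$net" &&
  $DOCKER run --rm --net "$net" alpine ip addr show
}

# Usage (run on a manager node; "testnet" is an example name):
#   attachable_demo testnet
```

Without `--attachable`, the `docker run --net ...` step is expected to be refused, for the reasons given on this slide.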
.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: manual-btp

## Run the application

- First, create the `redis` service; that one is using a Docker Hub image

.exercise[

- Create the `redis` service:
  ```bash
  docker service create --network dockercoins --name redis redis
  ```

]

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: manual-btp

## Run the other services

- Then, start the other services one by one

- We will use the images pushed previously

.exercise[

- Start the other services:
  ```bash
  REGISTRY=127.0.0.1:5000
  TAG=v1
  for SERVICE in hasher rng webui worker; do
    docker service create --network dockercoins --detach=true \
           --name $SERVICE $REGISTRY/$SERVICE:$TAG
  done
  ```

]

???

## Wait for our application to be up

- Later, we will see a way to watch progress for all the tasks of the cluster

- But for now, a scrappy shell loop will do the trick

.exercise[

- Repeatedly display the status of all our services:
  ```bash
  watch "docker service ls -q | xargs -n1 docker service ps"
  ```

- Stop it once everything is running

]

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: manual-btp

## Expose our application web UI

- We need to connect to the `webui` service, but it is not publishing any port

- Let's reconfigure it to publish a port

.exercise[

- Update `webui` so that we can connect to it from outside:
  ```bash
  docker service update webui --publish-add 8000:80 --detach=false
  ```

]

Note: to "de-publish" a port, you would have to specify the container port.
<br/>(i.e. in that case, `--publish-rm 80`)

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: manual-btp

## What happens when we modify a service?

- Let's find out what happened to our `webui` service

.exercise[

- Look at the tasks and containers associated with `webui`:
  ```bash
  docker service ps webui
  ```

]

--

class: manual-btp

The first version of the service (the one that was not exposed) has been shut down.

It has been replaced by the new version, with container port 80 published on port 8000.

(This will be discussed in more detail in the section about stateful services.)

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: manual-btp

## Connect to the web UI

- The web UI is now available on port 8000, *on all the nodes of the cluster*

.exercise[

- If you're using Play-With-Docker, just click on the `(8000)` badge

- Otherwise, point your browser to any node, on port 8000

]

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

## Scaling the application

- We can change scaling parameters with `docker service update` as well

- We will do the equivalent of `docker-compose scale`

.exercise[

- Bring up more workers:
  ```bash
  docker service update worker --replicas 10 --detach=false
  ```

- Check the result in the web UI

]

You should see the performance peaking at 10 hashes/s (like before).
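
As a side note, `docker service scale` is a shorter way to set the replica count. The sketch below pairs it with `docker service inspect` to read back the desired count from the service spec. The `scale_and_check` helper and the `DOCKER` dry-run variable are hypothetical conveniences, not workshop code.

```bash
# Command used to talk to the Engine; set DOCKER=echo for a dry run.
DOCKER="${DOCKER:-docker}"

# Scale a replicated service, then print the replica count stored in its spec.
scale_and_check() {
  local service="$1" replicas="$2"
  $DOCKER service scale "$service=$replicas" &&
  $DOCKER service inspect --format '{{.Spec.Mode.Replicated.Replicas}}' "$service"
}

# Usage:
#   scale_and_check worker 10
```

The value printed is the *desired* count; `docker service ps worker` shows how many tasks are actually running.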
.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- name: toc-global-scheduling class: title Global scheduling .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- class: manual-btp # Global scheduling - We want to utilize as best as we can the entropy generators on our nodes - We want to run exactly one `rng` instance per node - SwarmKit has a special scheduling mode for that, let's use it - We cannot enable/disable global scheduling on an existing service - We have to destroy and re-create the `rng` service .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Scaling the `rng` service .exercise[ - Remove the existing `rng` service: ```bash docker service rm rng ``` - Re-create the `rng` service with *global scheduling*: ```bash docker service create --name rng --network dockercoins --mode global \ --detach=false $REGISTRY/rng:$TAG ``` - Look at the result in the web UI ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: extra-details, manual-btp ## Why do we have to re-create the service to enable global scheduling? - Enabling it dynamically would make rolling updates semantics very complex - This might change in the future (after all, it was possible in 1.12 RC!) - As of Docker Engine 17.05, other parameters requiring to `rm`/`create` the service are: - service name - hostname - network .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: swarm-ready ## How did we make our app "Swarm-ready"? This app was written in June 2015. (One year before Swarm mode was released.) What did we change to make it compatible with Swarm mode? 
-- .exercise[ - Go to the app directory: ```bash cd ~/orchestration-workshop/dockercoins ``` - See modifications in the code: ```bash git log -p --since "4-JUL-2015" -- . ':!*.yml*' ':!*.html' ``` <!-- ```wait commit``` --> <!-- ```keys q``` --> ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: swarm-ready ## What did we change in our app since its inception? - Compose files - HTML file (it contains an embedded contextual tweet) - Dockerfiles (to switch to smaller images) - That's it! -- class: swarm-ready *We didn't change a single line of code in this app since it was written.* -- class: swarm-ready *The images that were [built in June 2015]( https://hub.docker.com/r/jpetazzo/dockercoins_worker/tags/) (when the app was written) can still run today ... <br/>... in Swarm mode (distributed across a cluster, with load balancing) ... <br/>... without any modification.* .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: swarm-ready ## How did we design our app in the first place? - [Twelve-Factor App](https://12factor.net/) principles - Service discovery using DNS names - Initially implemented as "links" - Then "ambassadors" - And now "services" - Existing apps might require more changes! .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- name: toc-integration-with-compose class: title Integration with Compose .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- class: manual-btp # Integration with Compose - The previous section showed us how to streamline image build and push - We will now see how to streamline service creation (i.e. 
get rid of the `for SERVICE in ...; do docker service create ...` part) .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Compose file version 3 (New in Docker Engine 1.13) - Almost identical to version 2 - Can be directly used by a Swarm cluster through `docker stack ...` commands - Introduces a `deploy` section to pass Swarm-specific parameters - Resource limits are moved to this `deploy` section - See [here](https://github.com/aanand/docker.github.io/blob/8524552f99e5b58452fcb1403e1c273385988b71/compose/compose-file.md#upgrading) for the complete list of changes - Supersedes *Distributed Application Bundles* (JSON payload describing an application; could be generated from a Compose file) .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Removing everything - Before deploying using "stacks," let's get a clean slate .exercise[ - Remove *all* the services: ```bash docker service ls -q | xargs docker service rm ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Our first stack We need a registry to move images around. 
Without a stack file, it would be deployed with the following command: ```bash docker service create --publish 5000:5000 registry:2 ``` Now, we are going to deploy it with the following stack file: ```yaml version: "3" services: registry: image: registry:2 ports: - "5000:5000" ``` .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Checking our stack files - All the stack files that we will use are in the `stacks` directory .exercise[ - Go to the `stacks` directory: ```bash cd ~/orchestration-workshop/stacks ``` - Check `registry.yml`: ```bash cat registry.yml ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Deploying our first stack - All stack manipulation commands start with `docker stack` - Under the hood, they map to `docker service` commands - Stacks have a *name* (which also serves as a namespace) - Stacks are specified with the aforementioned Compose file format version 3 .exercise[ - Deploy our local registry: ```bash docker stack deploy registry --compose-file registry.yml ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Inspecting stacks - `docker stack ps` shows the detailed state of all services of a stack .exercise[ - Check that our registry is running correctly: ```bash docker stack ps registry ``` - Confirm that we get the same output with the following command: ```bash docker service ps registry_registry ``` ] .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: manual-btp ## Specifics of stack deployment Our registry is not *exactly* identical to the one deployed with `docker service create`! 

- Each stack gets its own overlay network

- Services of the stack are connected to this network
  <br/>(unless specified differently in the Compose file)

- Services get network aliases matching their name in the Compose file
  <br/>(just like when Compose brings up an app specified in a v2 file)

- Services are explicitly named `<stack_name>_<service_name>`

- Services and tasks also get an internal label indicating which stack they belong to

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: auto-btp

## Testing our local registry

- Connecting to port 5000 *on any node of the cluster* routes us to the registry

- Therefore, we can use `localhost:5000` or `127.0.0.1:5000` as our registry

.exercise[

- Issue the following API request to the registry:
  ```bash
  curl 127.0.0.1:5000/v2/_catalog
  ```

]

It should return:

```json
{"repositories":[]}
```

If that doesn't work, retry a few times; perhaps the container is still starting.
.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: auto-btp

## Pushing an image to our local registry

- We can retag a small image, and push it to the registry

.exercise[

- Make sure we have the busybox image, and retag it:
  ```bash
  docker pull busybox
  docker tag busybox 127.0.0.1:5000/busybox
  ```

- Push it:
  ```bash
  docker push 127.0.0.1:5000/busybox
  ```

]

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

class: auto-btp

## Checking what's on our local registry

- The registry API has endpoints to query what's there

.exercise[

- Ensure that our busybox image is now in the local registry:
  ```bash
  curl http://127.0.0.1:5000/v2/_catalog
  ```

]

The curl command should now output:
```json
{"repositories":["busybox"]}
```

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

## Building and pushing stack services

- When using Compose file version 2 and above, you can specify *both* `build` and `image`

- When both keys are present:

  - Compose does "business as usual" (uses `build`)

  - but the resulting image is named as indicated by the `image` key
    <br/>
    (instead of `<projectname>_<servicename>:latest`)

  - it can be pushed to a registry with `docker-compose push`

- Example:

  ```yaml
    webfront:
      build: www
      image: myregistry.company.net:5000/webfront
  ```

.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)]
---

## Using Compose to build and push images

.exercise[

- Try it:
  ```bash
  docker-compose -f dockercoins.yml build
  docker-compose -f dockercoins.yml push
  ```

]

Let's have a look at the `dockercoins.yml` file while this is building and pushing.
.debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ```yaml version: "3" services: rng: build: dockercoins/rng image: ${REGISTRY-127.0.0.1:5000}/rng:${TAG-latest} deploy: mode: global ... redis: image: redis ... worker: build: dockercoins/worker image: ${REGISTRY-127.0.0.1:5000}/worker:${TAG-latest} ... deploy: replicas: 10 ``` .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Deploying the application - Now that the images are on the registry, we can deploy our application stack .exercise[ - Create the application stack: ```bash docker stack deploy dockercoins --compose-file dockercoins.yml ``` ] We can now connect to any of our nodes on port 8000, and we will see the familiar hashing speed graph. .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Maintaining multiple environments There are many ways to handle variations between environments. - Compose loads `docker-compose.yml` and (if it exists) `docker-compose.override.yml` - Compose can load alternate file(s) by setting the `-f` flag or the `COMPOSE_FILE` environment variable - Compose files can *extend* other Compose files, selectively including services: ```yaml web: extends: file: common-services.yml service: webapp ``` See [this documentation page](https://docs.docker.com/compose/extends/) for more details about these techniques. .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- class: extra-details ## Good to know ... - Compose file version 3 adds the `deploy` section - Further versions (3.1, ...) add more features (secrets, configs ...) - You can re-run `docker stack deploy` to update a stack - You can make manual changes with `docker service update` ... - ... 
But they will be wiped out each time you `docker stack deploy` (That's the intended behavior, when one thinks about it!) - `extends` doesn't work with `docker stack deploy` (But you can use `docker-compose config` to "flatten" your configuration) .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- ## Summary - We've seen how to set up a Swarm - We've used it to host our own registry - We've built our app container images - We've used the registry to host those images - We've deployed and scaled our application - We've seen how to use Compose to streamline deployments - Awesome job, team! .debug[[swarm/ourapponswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/ourapponswarm.md)] --- name: toc-updating-services class: title Updating services .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Updating services - We want to make changes to the web UI - The process is as follows: - edit code - build new image - ship new image - run new image .debug[[swarm/updatingservices.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/updatingservices.md)] --- ## Updating a single service the hard way - To update a single service, we could do the following: ```bash REGISTRY=localhost:5000 TAG=v0.3 IMAGE=$REGISTRY/dockercoins_webui:$TAG docker build -t $IMAGE webui/ docker push $IMAGE docker service update dockercoins_webui --image $IMAGE ``` - Make sure to tag properly your images: update the `TAG` at each iteration (When you check which images are running, you want these tags to be uniquely identifiable) .debug[[swarm/updatingservices.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/updatingservices.md)] --- ## Updating services the easy way - With the Compose integration, all we have to do is: ```bash export TAG=v0.3 docker-compose -f composefile.yml build 
  docker-compose -f composefile.yml push
  docker stack deploy -c composefile.yml nameofstack
  ```

--

- That's exactly what we used earlier to deploy the app

- We don't need to learn new commands!

.debug[[swarm/updatingservices.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/updatingservices.md)]
---

## Updating the web UI

- Let's make the numbers on the Y axis bigger!

.exercise[

- Edit the file `webui/files/index.html`:
  ```bash
  vi dockercoins/webui/files/index.html
  ```

  <!-- ```wait <title>``` -->

- Locate the `font-size` CSS property and increase it (at least double it)

  <!--
  ```keys /font-size```
  ```keys ^J```
  ```keys lllllllllllllcw45px```
  ```keys ^[``` ]
  ```keys :wq```
  ```keys ^J```
  -->

- Save and exit

- Build, ship, and run:
  ```bash
  export TAG=v0.3
  docker-compose -f dockercoins.yml build
  docker-compose -f dockercoins.yml push
  docker stack deploy -c dockercoins.yml dockercoins
  ```

]

.debug[[swarm/updatingservices.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/updatingservices.md)]
---

## Viewing our changes

- Wait at least 10 seconds (for the new version to be deployed)

- Then reload the web UI

- Or just mash "reload" frantically

- ... Eventually the legend on the left will be bigger!

.debug[[swarm/updatingservices.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/updatingservices.md)]
---

class: title, in-person

Operating the Swarm

.debug[[swarm/operatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/operatingswarm.md)]
---

name: part-2

class: title, self-paced

Part 2

.debug[[swarm/operatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/operatingswarm.md)]
---

class: self-paced

## Before we start ...

The following exercises assume that you have a 5-node Swarm cluster.

If you come here from a previous tutorial and still have your cluster: great!

Otherwise: check [part 1](#part-1) to learn how to set up your own cluster.

We pick up exactly where we left you, so we assume that you have:

- a five-node Swarm cluster,

- a self-hosted registry,

- DockerCoins up and running.

The next slide has a cheat sheet if you need to set that up in a pinch.

.debug[[swarm/operatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/operatingswarm.md)]
---

class: self-paced

## Catching up

Assuming you have 5 nodes provided by
[Play-With-Docker](http://www.play-with-docker.com/), do this from `node1`:

```bash
# Initialize the Swarm on node1, then join nodes 2 to 5 as managers
docker swarm init --advertise-addr eth0
TOKEN=$(docker swarm join-token -q manager)
for N in $(seq 2 5); do
  DOCKER_HOST=tcp://node$N:2375 docker swarm join --token $TOKEN node1:2377
done
# Get the workshop files, then deploy the registry and DockerCoins
git clone https://github.com/jpetazzo/orchestration-workshop
cd orchestration-workshop/stacks
docker stack deploy --compose-file registry.yml registry
docker-compose -f dockercoins.yml build
docker-compose -f dockercoins.yml push
docker stack deploy --compose-file dockercoins.yml dockercoins
```

You should now be able to connect to port 8000 and see the DockerCoins web UI.
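
If the web UI does not show up right away, some services may still be starting. One way to check, sketched below, is to filter the output of `docker service ls` for services whose running task count differs from the desired count. The `unconverged` function is a hypothetical helper, not part of the workshop repository.

```bash
# Print the names of services that have not reached their desired task count.
# Input: lines of "NAME RUNNING/DESIRED", as produced by:
#   docker service ls --format '{{.Name}} {{.Replicas}}'
unconverged() {
  local name replicas _rest
  while read -r name replicas _rest; do
    # ${replicas%/*} is the running count, ${replicas#*/} the desired count
    [ "${replicas%/*}" = "${replicas#*/}" ] || echo "$name"
  done
}

# Usage:
#   docker service ls --format '{{.Name}} {{.Replicas}}' | unconverged
```

An empty output means that every service reports as many running tasks as requested.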
.debug[[swarm/operatingswarm.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/operatingswarm.md)]
---

name: toc-secrets-management-and-encryption-at-rest
class: title

Secrets management and encryption at rest

.nav[[Back to table of contents](#toc-chapter-3)]

.debug[(automatically generated title slide)]

---

# Secrets management and encryption at rest

(New in Docker Engine 1.13)

- Secrets management = selectively and securely bring secrets to services

- Encryption at rest = protect against storage theft or prying

- Remember:

  - control plane is authenticated through mutual TLS, certs rotated every 90 days

  - control plane is encrypted with AES-GCM, keys rotated every 12 hours

  - data plane is not encrypted by default (for performance reasons),
    <br/>but we saw earlier how to enable that with a single flag

.debug[[swarm/security.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/security.md)]
---

name: toc-least-privilege-model
class: title

Least privilege model

.nav[[Back to table of contents](#toc-chapter-3)]

.debug[(automatically generated title slide)]

---

# Least privilege model

- All the important data is stored in the "Raft log"

- Manager nodes have read/write access to this data

- Worker nodes have no access to this data

- Workers only receive the minimum amount of data that they need:

  - which services to run
  - network configuration information for these services
  - credentials for these services

- Compromising a worker node does not give access to the full cluster

.debug[[swarm/leastprivilege.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/leastprivilege.md)]
---

## What can I do if I compromise a worker node?
- I can enter the containers running on that node - I can access the configuration and credentials used by these containers - I can inspect the network traffic of these containers - I cannot inspect or disrupt the network traffic of other containers (network information is provided by manager nodes; ARP spoofing is not possible) - I cannot infer the topology of the cluster and its number of nodes - I can only learn the IP addresses of the manager nodes .debug[[swarm/leastprivilege.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/leastprivilege.md)] --- ## Guidelines for workload isolation leveraging least privilege model - Define security levels - Define security zones - Put managers in the highest security zone - Enforce workloads of a given security level to run in a given zone - Enforcement can be done with [Authorization Plugins](https://docs.docker.com/engine/extend/plugins_authorization/) .debug[[swarm/leastprivilege.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/leastprivilege.md)] --- ## Learning more about container security .blackbelt[DC17US: Securing Containers, One Patch At A Time ([video](https://www.youtube.com/watch?v=jZSs1RHwcqo&list=PLkA60AVN3hh-biQ6SCtBJ-WVTyBmmYho8&index=4))] .blackbelt[DC17EU: Container-relevant Upstream Kernel Developments ([video](https://dockercon.docker.com/watch/7JQBpvHJwjdW6FKXvMfCK1))] .blackbelt[DC17EU: What Have Syscalls Done for you Lately? ([video](https://dockercon.docker.com/watch/4ZxNyWuwk9JHSxZxgBBi6J))] .debug[[swarm/leastprivilege.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/leastprivilege.md)] --- ## A reminder about *scope* - Out of the box, Docker API access is "all or nothing" - When someone has access to the Docker API, they can access *everything* - If your developers are using the Docker API to deploy on the dev cluster ... ... and the dev cluster is the same as the prod cluster ... ... 
it means that your devs have access to your production data, passwords, etc. - This can easily be avoided .debug[[swarm/apiscope.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/apiscope.md)] --- ## Fine-grained API access control A few solutions, by increasing order of flexibility: - Use separate clusters for different security perimeters (And different credentials for each cluster) -- - Add an extra layer of abstraction (sudo scripts, hooks, or full-blown PAAS) -- - Enable [authorization plugins] - each API request is vetted by your plugin(s) - by default, the *subject name* in the client TLS certificate is used as user name - example: [user and permission management] in [UCP] [authorization plugins]: https://docs.docker.com/engine/extend/plugins_authorization/ [UCP]: https://docs.docker.com/datacenter/ucp/2.1/guides/ [user and permission management]: https://docs.docker.com/datacenter/ucp/2.1/guides/admin/manage-users/ .debug[[swarm/apiscope.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/apiscope.md)] --- name: toc-centralized-logging class: title Centralized logging .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- name: logging # Centralized logging - We want to send all our container logs to a central place - If that place could offer a nice web dashboard too, that'd be nice -- - We are going to deploy an ELK stack - It will accept logs over a GELF socket - We will update our services to send logs through the GELF logging driver .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- name: toc-setting-up-elk-to-store-container-logs class: title Setting up ELK to store container logs .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Setting up ELK to store container logs *Important foreword: this is not an "official" or "recommended" setup; 
it is just an example. We used ELK in this demo because it's a popular setup and we keep being asked about it; but you will have equal success with Fluent or other logging stacks!* What we will do: - Spin up an ELK stack with services - Gaze at the spiffy Kibana web UI - Manually send a few log entries using one-shot containers - Set our containers up to send their logs to Logstash .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- ## What's in an ELK stack? - ELK is three components: - ElasticSearch (to store and index log entries) - Logstash (to receive log entries from various sources, process them, and forward them to various destinations) - Kibana (to view/search log entries with a nice UI) - The only component that we will configure is Logstash - We will accept log entries using the GELF protocol - Log entries will be stored in ElasticSearch, <br/>and displayed on Logstash's stdout for debugging .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-manual ## Setting up ELK - We need three containers: ElasticSearch, Logstash, Kibana - We will place them on a common network, `logging` .exercise[ - Create the network: ```bash docker network create --driver overlay logging ``` - Create the ElasticSearch service: ```bash docker service create --network logging --name elasticsearch elasticsearch:2.4 ``` ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-manual ## Setting up Kibana - Kibana exposes the web UI - Its default port (5601) needs to be published - It needs a tiny bit of configuration: the address of the ElasticSearch service - We don't want Kibana logs to show up in Kibana (it would create clutter) <br/>so we tell Logspout to ignore them .exercise[ - Create the Kibana service: ```bash docker service create --network logging --name kibana 
--publish 5601:5601 \ -e ELASTICSEARCH_URL=http://elasticsearch:9200 kibana:4.6 ``` ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-manual ## Setting up Logstash - Logstash needs some configuration to listen to GELF messages and send them to ElasticSearch - We could author a custom image bundling this configuration - We can also pass the [configuration](https://github.com/jpetazzo/orchestration-workshop/blob/master/elk/logstash.conf) on the command line .exercise[ - Create the Logstash service: ```bash docker service create --network logging --name logstash -p 12201:12201/udp \ logstash:2.4 -e "$(cat ~/orchestration-workshop/elk/logstash.conf)" ``` ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-manual ## Checking Logstash - Before proceeding, let's make sure that Logstash started properly .exercise[ - Lookup the node running the Logstash container: ```bash docker service ps logstash ``` - Connect to that node ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-manual ## View Logstash logs .exercise[ - View the logs of the logstash service: ```bash docker service logs logstash --follow ``` <!-- ```wait "message" => "ok"``` --> <!-- ```keys ^C``` --> ] You should see the heartbeat messages: .small[ ```json { "message" => "ok", "host" => "1a4cfb063d13", "@version" => "1", "@timestamp" => "2016-06-19T00:45:45.273Z" } ``` ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-auto ## Deploying our ELK cluster - We will use a stack file .exercise[ - Build, ship, and run our ELK stack: ```bash docker-compose -f elk.yml build docker-compose -f elk.yml push docker stack deploy elk -c elk.yml ``` ] Note: the *build* and *push* steps are not strictly necessary, 
but they don't hurt! Let's have a look at the [Compose file]( https://github.com/jpetazzo/orchestration-workshop/blob/master/stacks/elk.yml). .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- class: elk-auto ## Checking that our ELK stack works correctly - Let's view the logs of logstash (Who logs the loggers?) .exercise[ - Stream logstash's logs: ```bash docker service logs --follow --tail 1 elk_logstash ``` ] You should see the heartbeat messages: .small[ ```json { "message" => "ok", "host" => "1a4cfb063d13", "@version" => "1", "@timestamp" => "2016-06-19T00:45:45.273Z" } ``` ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- ## Testing the GELF receiver - In a new window, we will generate a logging message - We will use a one-off container, and Docker's GELF logging driver .exercise[ - Send a test message: ```bash docker run --log-driver gelf --log-opt gelf-address=udp://127.0.0.1:12201 \ --rm alpine echo hello ``` ] The test message should show up in the logstash container logs. .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- ## Sending logs from a service - We were sending from a "classic" container so far; let's send logs from a service instead - We're lucky: the parameters (`--log-driver` and `--log-opt`) are exactly the same! .exercise[ - Send a test message: ```bash docker service create \ --log-driver gelf --log-opt gelf-address=udp://127.0.0.1:12201 \ alpine echo hello ``` <!-- ```wait Detected task failure``` --> <!-- ```keys ^C``` --> ] The test message should show up as well in the logstash container logs. 
-- In fact, *multiple messages will show up, and continue to show up every few seconds!* .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- ## Restart conditions - By default, if a container exits (or is killed with `docker kill`, or runs out of memory ...), the Swarm will restart it (possibly on a different machine) - This behavior can be changed by setting the *restart condition* parameter .exercise[ - Change the restart condition so that Swarm doesn't try to restart our container forever: ```bash docker service update `xxx` --restart-condition none ``` ] Available restart conditions are `none`, `any`, and `on-error`. You can also set `--restart-delay`, `--restart-max-attempts`, and `--restart-window`. .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- ## Connect to Kibana - The Kibana web UI is exposed on cluster port 5601 .exercise[ - Connect to port 5601 of your cluster - if you're using Play-With-Docker, click on the (5601) badge above the terminal - otherwise, open http://(any-node-address):5601/ with your browser ] .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- ## "Configuring" Kibana - If you see a status page with a yellow item, wait a minute and reload (Kibana is probably still initializing) - Kibana should offer you to "Configure an index pattern": <br/>in the "Time-field name" drop down, select "@timestamp", and hit the "Create" button - Then: - click "Discover" (in the top-left corner) - click "Last 15 minutes" (in the top-right corner) - click "Last 1 hour" (in the list in the middle) - click "Auto-refresh" (top-right corner) - click "5 seconds" (top-left of the list) - You should see a series of green bars (with one new green bar every minute) 
.debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)]

---

## Updating our services to use GELF

- We will now tell our Swarm to add GELF logging to all our services

- This is done with the `docker service update` command

- The logging flags are the same as before

.exercise[

- Enable GELF logging for the `rng` service:
  ```bash
  docker service update dockercoins_rng \
         --log-driver gelf --log-opt gelf-address=udp://127.0.0.1:12201
  ```

]

After ~15 seconds, you should see the log messages in Kibana.

.debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)]

---

## Viewing container logs

- Go back to Kibana

- Container logs should be showing up!

- We can customize the web UI to be more readable

.exercise[

- In the left column, move the mouse over the following
  columns, and click the "Add" button that appears:

  - host
  - container_name
  - message

<!--
  - logsource
  - program
  - message
-->

]

.debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)]

---

## .warning[Don't update stateful services!]

- What would have happened if we had updated the Redis service?

- When a service changes, SwarmKit replaces existing containers with new ones

- This is fine for stateless services

- But if you update a stateful service, its data will be lost in the process

- If we updated our Redis service, all our DockerCoins would be lost

.debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)]

---

## Important afterword

**This is not a "production-grade" setup.**

It is just an educational example. We set up a single
ElasticSearch instance and a single Logstash instance.

In a production setup, you need an ElasticSearch cluster
(both for capacity and availability reasons). You also
need multiple Logstash instances.
And if you want to withstand bursts of logs, you need some kind of message queue: Redis if you're cheap, Kafka if you want to make sure that you don't drop messages on the floor. Good luck. If you want to learn more about the GELF driver, have a look at [this blog post]( http://jpetazzo.github.io/2017/01/20/docker-logging-gelf/). .debug[[swarm/logging.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/logging.md)] --- name: toc-metrics-collection class: title Metrics collection .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- # Metrics collection - We want to gather metrics in a central place - We will gather node metrics and container metrics - We want a nice interface to view them (graphs) .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- ## Node metrics - CPU, RAM, disk usage on the whole node - Total number of processes running, and their states - Number of open files, sockets, and their states - I/O activity (disk, network), per operation or volume - Physical/hardware (when applicable): temperature, fan speed ... - ... and much more! 
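
To make these categories more concrete: on Linux, most node-level metrics ultimately come from files under `/proc`. The sketch below is only an illustration (`parse_loadavg` is a hypothetical helper, not part of any tool used in this workshop). It reads a file in the `/proc/loadavg` format and prints the 1-minute load average along with the number of running and total processes.

```bash
# Hypothetical helper (not part of Snap or Prometheus): parse a file in
# the /proc/loadavg format, e.g. "0.42 0.30 0.25 2/345 6789".
# Fields: 1, 5 and 15 minute load averages, running/total scheduling
# entities, and the most recently created PID.
# $1 = file to read (defaults to the live /proc/loadavg).
parse_loadavg() {
  awk '{ split($4, procs, "/")
         printf "load1=%s running=%s total=%s\n", $1, procs[1], procs[2] }' \
      "${1:-/proc/loadavg}"
}
```

Running `parse_loadavg` without arguments on a Linux node reads the live values. Collectors such as the node exporter gather this kind of data (and much more) on a schedule.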
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

## Container metrics

- Similar to node metrics, but not totally identical

- RAM breakdown will be different

  - active vs inactive memory
  - some memory is *shared* between containers, and accounted for specially

- I/O activity is also harder to track

  - async writes can cause deferred "charges"
  - some page-ins are also shared between containers

For details about container metrics, see:
<br/>
http://jpetazzo.github.io/2013/10/08/docker-containers-metrics/

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap, prom

## Tools

We will build *two* different metrics pipelines:

- One based on Intel Snap,

- Another based on Prometheus.

If you're using Play-With-Docker, skip the exercises
relevant to Intel Snap (we rely on an SSH server to deploy,
and PWD doesn't have that yet).

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## First metrics pipeline

We will use three open source Go projects for our first metrics pipeline:

- Intel Snap

  Collects, processes, and publishes metrics

- InfluxDB

  Stores metrics

- Grafana

  Displays metrics visually

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Snap

- [github.com/intelsdi-x/snap](https://github.com/intelsdi-x/snap)

- Can collect, process, and publish metric data

- Doesn't store metrics

- Works as a daemon (snapd) controlled by a CLI (snapctl)

- Offloads collecting, processing, and publishing to plugins

- Does nothing out of the box; configuration required!
- Docs: https://github.com/intelsdi-x/snap/blob/master/docs/

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## InfluxDB

- Snap doesn't store metrics data

- InfluxDB is specifically designed for time-series data

  - CRud vs. CRUD (you rarely if ever update/delete data)

  - orthogonal read and write patterns

  - storage format optimization is key (for disk usage and performance)

- Snap has a plugin that can *publish* to InfluxDB

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Grafana

- Snap cannot show graphs

- InfluxDB cannot show graphs

- Grafana will take care of that

- Grafana can read data from InfluxDB and display it as graphs

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Getting and setting up Snap

- We will install Snap directly on the nodes

- Release tarballs are available from GitHub

- We will use a *global service*
  <br/>(started on all nodes, including nodes added later)

- This service will download and unpack Snap in /opt and /usr/local

- /opt and /usr/local will be bind-mounted from the host

- This service will effectively install Snap on the hosts

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## The Snap installer service

- This will get Snap on all nodes

.exercise[

```bash
docker service create --restart-condition=none --mode global \
       --mount type=bind,source=/usr/local/bin,target=/usr/local/bin \
       --mount type=bind,source=/opt,target=/opt centos sh -c '
SNAPVER=v0.16.1-beta
RELEASEURL=https://github.com/intelsdi-x/snap/releases/download/$SNAPVER
curl -sSL $RELEASEURL/snap-$SNAPVER-linux-amd64.tar.gz | tar -C /opt -zxf-
curl -sSL $RELEASEURL/snap-plugins-$SNAPVER-linux-amd64.tar.gz | tar -C /opt -zxf-
ln -s snap-$SNAPVER /opt/snap
for BIN in snapd snapctl; do ln -s /opt/snap/bin/$BIN /usr/local/bin/$BIN; done
' # If you copy-paste that block, do not forget that final quote ☺
```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## First contact with `snapd`

- The core of Snap is `snapd`, the Snap daemon

- It is an application made up of a REST API, a control module, and a scheduler module

.exercise[

- Start `snapd` with plugin trust disabled and log level set to debug:
  ```bash
  snapd -t 0 -l 1
  ```

]

- More resources:

  https://github.com/intelsdi-x/snap/blob/master/docs/SNAPD.md
  https://github.com/intelsdi-x/snap/blob/master/docs/SNAPD_CONFIGURATION.md

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Using `snapctl` to interact with `snapd`

- Let's load a *collector* plugin and a *publisher* plugin

.exercise[

- Open a new terminal

- Load the psutil collector plugin:
  ```bash
  snapctl plugin load /opt/snap/plugin/snap-plugin-collector-psutil
  ```

- Load the file publisher plugin:
  ```bash
  snapctl plugin load /opt/snap/plugin/snap-plugin-publisher-mock-file
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Checking what we've done

- Good to know: Docker CLI uses `ls`, Snap CLI uses `list`

.exercise[

- See your loaded plugins:
  ```bash
  snapctl plugin list
  ```

- See the metrics you can collect:
  ```bash
  snapctl metric list
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Actually collecting metrics: introducing *tasks*

- To start collecting/processing/publishing metric data, you need to create a *task*

- A *task* indicates:

  - *what* to collect (which metrics)
  - *when* to collect it (e.g. how often)
  - *how* to process it (e.g.
use it directly, or compute moving averages) - *where* to publish it - Tasks can be defined with manifests written in JSON or YAML - Some plugins, such as the Docker collector, allow for wildcards (\*) in the metrics "path" <br/>(see snap/docker-influxdb.json) - More resources: https://github.com/intelsdi-x/snap/blob/master/docs/TASKS.md .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Our first task manifest ```yaml version: 1 schedule: type: "simple" # collect on a set interval interval: "1s" # of every 1s max-failures: 10 workflow: collect: # first collect metrics: # metrics to collect /intel/psutil/load/load1: {} config: # there is no configuration publish: # after collecting, publish - plugin_name: "file" # use the file publisher config: file: "/tmp/snap-psutil-file.log" # write to this file ``` .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Creating our first task - The task manifest shown on the previous slide is stored in `snap/psutil-file.yml`. 
.exercise[ - Create a task using the manifest: ```bash cd ~/orchestration-workshop/snap snapctl task create -t psutil-file.yml ``` ] The output should look like the following: ``` Using task manifest to create task Task created ID: 240435e8-a250-4782-80d0-6fff541facba Name: Task-240435e8-a250-4782-80d0-6fff541facba State: Running ``` .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Checking existing tasks .exercise[ - This will confirm that our task is running correctly, and remind us of its task ID ```bash snapctl task list ``` ] The output should look like the following: ``` ID NAME STATE HIT MISS FAIL CREATED 24043...acba Task-24043...acba Running 4 0 0 2:34PM 8-13-2016 ``` .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Viewing our task dollars at work - The task is using a very simple publisher, `mock-file` - That publisher just writes text lines in a file (one line per data point) .exercise[ - Check that the data is flowing indeed: ```bash tail -f /tmp/snap-psutil-file.log ``` ] To exit, hit `^C` .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Debugging tasks - When a task is not directly writing to a local file, use `snapctl task watch` - `snapctl task watch` will stream the metrics you are collecting to STDOUT .exercise[ ```bash snapctl task watch <ID> ``` ] To exit, hit `^C` .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Stopping snap - Our Snap deployment has a few flaws: - snapd was started manually - it is running on a single node - the configuration is purely local -- class: snap - We want to change that! 
--

class: snap

- But first, go back to the terminal where `snapd` is running, and hit `^C`

- All tasks will be stopped; all plugins will be unloaded; Snap will exit

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Snap Tribe Mode

- Tribe is Snap's clustering mechanism

- When tribe mode is enabled, nodes can join *agreements*

- When a node in an *agreement* does something (e.g. load a plugin or run a task),
  <br/>other nodes of that agreement do the same thing

- We will use it to load the Docker collector and InfluxDB publisher on all nodes,
  <br/>and run a task to use them

- Without tribe mode, we would have to load plugins and run tasks manually on every node

- More resources:
  https://github.com/intelsdi-x/snap/blob/master/docs/TRIBE.md

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Running Snap itself on every node

- Snap runs in the foreground, so you need to use `&` or start it in tmux

.exercise[

- Run the following command *on every node:*
  ```bash
  snapd -t 0 -l 1 --tribe --tribe-seed node1:6000
  ```

]

If you're *not* using Play-With-Docker, there is another way to start Snap!

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Starting a daemon through SSH

.warning[Hackety hack ahead!]

- We will create a *global service*

- That global service will install an SSH client

- With that SSH client, the service will connect back to its local node
  <br/>(i.e. "break out" of the container, using the SSH key that we provide)

- Once logged in on the node, the service starts snapd with Tribe Mode enabled

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Running Snap itself on every node

- I might go to hell for showing you this, but here it goes ...
.exercise[

- Start Snap all over the place:
  ```bash
  docker service create --name snapd --mode global \
         --mount type=bind,source=$HOME/.ssh/id_rsa,target=/sshkey \
         alpine sh -c "
                apk add --no-cache openssh-client &&
                ssh -o StrictHostKeyChecking=no -i /sshkey docker@172.17.0.1 \
                  sudo snapd -t 0 -l 1 --tribe --tribe-seed node1:6000
         " # If you copy-paste that block, don't forget that final quote :-)
  ```

]

Remember: this *does not work* with Play-With-Docker (which doesn't have SSH).

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Viewing the members of our tribe

- If everything went fine, Snap is now running in tribe mode

.exercise[

- View the members of our tribe:
  ```bash
  snapctl member list
  ```

]

This should show the 5 nodes with their hostnames.

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Create an agreement

- We can now create an *agreement* for our plugins and tasks

.exercise[

- Create an agreement; make sure to use the same name all along:
  ```bash
  snapctl agreement create docker-influxdb
  ```

]

The output should look like the following:

```
Name             Number of Members       plugins         tasks
docker-influxdb  0                       0               0
```

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Instruct all nodes to join the agreement

- We don't need another fancy global service!
- We can join nodes from any existing node of the cluster

.exercise[

- Add all nodes to the agreement:
  ```bash
  snapctl member list | tail -n +2 |
    xargs -n1 snapctl agreement join docker-influxdb
  ```

]

The last bit of output should look like the following:

```
Name             Number of Members       plugins         tasks
docker-influxdb  5                       0               0
```

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Start a container on every node

- The Docker plugin requires at least one container to be started

- Normally, at this point, you will have at least one container on each node

- But just in case you did things differently, let's create a dummy global service

.exercise[

- Create an alpine container on every node of the cluster:
  ```bash
  docker service create --name ping --mode global alpine ping 8.8.8.8
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Running InfluxDB

- We will create a service for InfluxDB

- We will use the official image

- InfluxDB uses multiple ports:

  - 8086 (HTTP API; we need this)

  - 8083 (admin interface; we need this)

  - 8088 (cluster communication; not needed here)

  - more ports for other protocols (graphite, collectd...)

- We will just publish the first two

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Creating the InfluxDB service

.exercise[

- Start an InfluxDB service, publishing ports 8083 and 8086:
  ```bash
  docker service create --name influxdb \
         --publish 8083:8083 \
         --publish 8086:8086 \
         influxdb:0.13
  ```

]

Note: this will allow any node to publish metrics data to `localhost:8086`,
and it will allow us to access the admin interface by connecting to any node
on port 8083.
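
Before moving on, it can help to check that the service is reachable through the routing mesh. The sketch below assumes that InfluxDB's HTTP API answers `GET /ping` with status 204 when it is up, and that `curl` is installed on the node; `influx_ready` is a hypothetical helper name.

```bash
# Hypothetical helper: succeed only if InfluxDB's /ping endpoint
# returns HTTP 204 (assumed to be the healthy response).
# $1 = host:port of any node (defaults to localhost:8086).
influx_ready() {
  local code
  code=$(curl -s -o /dev/null -w '%{http_code}' "http://${1:-localhost:8086}/ping")
  [ "$code" = "204" ]
}
```

For example, `influx_ready && echo "InfluxDB is up"` can be run on any node, since the published port is available cluster-wide.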
.warning[Make sure to use InfluxDB 0.13; a few things changed in 1.0
(for example, the default retention policy is now named "autogen")
and this breaks a few things.]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Setting up InfluxDB

- We need to create the "snap" database

.exercise[

- Open port 8083 with your browser

- Enter the following query in the query box:
  ```
  CREATE DATABASE "snap"
  ```

- In the top-right corner, select "Database: snap"

]

Note: the InfluxDB query language *looks like* SQL but it's not.

???

## Setting a retention policy

- When graduating to 1.0, InfluxDB changed the name of the default policy

- It used to be "default" and it is now "autogen"

- Snap still uses "default" and this results in errors

.exercise[

- Create a "default" retention policy by entering the following query in the box:
  ```
  CREATE RETENTION POLICY "default" ON "snap" DURATION 1w REPLICATION 1
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Load Docker collector and InfluxDB publisher

- We will load plugins on the local node

- Since our local node is a member of the agreement, all other
  nodes in the agreement will also load these plugins

.exercise[

- Load Docker collector:
  ```bash
  snapctl plugin load /opt/snap/plugin/snap-plugin-collector-docker
  ```

- Load InfluxDB publisher:
  ```bash
  snapctl plugin load /opt/snap/plugin/snap-plugin-publisher-influxdb
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: snap

## Start a simple collection task

- Again, we will create a task on the local node

- The task will be replicated on the other nodes that are members of the same agreement

.exercise[

- Load a task manifest file collecting a couple of metrics on all containers,
  <br/>and sending them to InfluxDB:
  ```bash
  cd ~/orchestration-workshop/snap
snapctl task create -t docker-influxdb.json ``` ] Note: the task description sends metrics to the InfluxDB API endpoint located at 127.0.0.1:8086. Since the InfluxDB container is published on port 8086, 127.0.0.1:8086 always routes traffic to the InfluxDB container. .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## If things go wrong... Note: if a task runs into a problem (e.g. it's trying to publish to a metrics database, but the database is unreachable), the task will be stopped. You will have to restart it manually by running: ```bash snapctl task enable <ID> snapctl task start <ID> ``` This must be done *per node*. Alternatively, you can delete+re-create the task (it will delete+re-create on all nodes). .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Check that metric data shows up in InfluxDB - Let's check existing data with a few manual queries in the InfluxDB admin interface .exercise[ - List "measurements": ``` SHOW MEASUREMENTS ``` (This should show two generic entries corresponding to the two collected metrics.) - View time series data for one of the metrics: ``` SELECT * FROM "intel/docker/stats/cgroups/cpu_stats/cpu_usage/total_usage" ``` (This should show a list of data points with **time**, **docker_id**, **source**, and **value**.) 
] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Deploy Grafana - We will use an almost-official image, `grafana/grafana` - We will publish Grafana's web interface on its default port (3000) .exercise[ - Create the Grafana service: ```bash docker service create --name grafana --publish 3000:3000 grafana/grafana:3.1.1 ``` ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Set up Grafana .exercise[ - Open port 3000 with your browser - Identify with "admin" as the username and password - Click on the Grafana logo (the orange spiral in the top left corner) - Click on "Data Sources" - Click on "Add data source" (green button on the right) ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Add InfluxDB as a data source for Grafana .small[ Fill the form exactly as follows: - Name = "snap" - Type = "InfluxDB" In HTTP settings, fill as follows: - Url = "http://(IP.address.of.any.node):8086" - Access = "direct" - Leave HTTP Auth untouched In InfluxDB details, fill as follows: - Database = "snap" - Leave user and password blank Finally, click on "add", you should see a green message saying "Success - Data source is working". If you see an orange box (sometimes without a message), it means that you got something wrong. Triple check everything again. 
] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap  .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Create a dashboard in Grafana .exercise[ - Click on the Grafana logo again (the orange spiral in the top left corner) - Hover over "Dashboards" - Click "+ New" - Click on the little green rectangle that appeared in the top left - Hover over "Add Panel" - Click on "Graph" ] At this point, you should see a sample graph showing up. .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap ## Setting up a graph in Grafana .exercise[ - Panel data source: select snap - Click on the SELECT metrics query to expand it - Click on "select measurement" and pick CPU usage - Click on the "+" right next to "WHERE" - Select "docker_id" - Select the ID of a container of your choice (e.g. the one running InfluxDB) - Click on the "+" on the right of the "SELECT" line - Add "derivative" - In the "derivative" option, select "1s" - In the top right corner, click on the clock, and pick "last 5 minutes" ] Congratulations, you are viewing the CPU usage of a single container! .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap  .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap, prom ## Before moving on ... - Leave that tab open! - We are going to set up *another* metrics system - ... And then compare both graphs side by side .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: snap, prom ## Prometheus vs. 
Snap

- Prometheus is another metrics collection system

- Snap *pushes* metrics; Prometheus *pulls* them

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: prom

## Prometheus components

- The *Prometheus server* pulls, stores, and displays metrics

- Its configuration defines a list of *exporter* endpoints
  <br/>(that list can be dynamic, using e.g. Consul, DNS, Etcd...)

- The exporters expose metrics over HTTP using a simple line-oriented format

  (An optimized format using protobuf is also possible)

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: prom

## It's all about the `/metrics`

- This is what the *node exporter* looks like:

  http://demo.robustperception.io:9100/metrics

- Prometheus itself exposes its own internal metrics, too:

  http://demo.robustperception.io:9090/metrics

- A *Prometheus server* will *scrape* URLs like these

  (It can also use protobuf to avoid the overhead of parsing line-oriented formats!)

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: prom-manual

## Collecting metrics with Prometheus on Swarm

- We will run two *global services* (i.e.
scheduled on all our nodes):

  - the Prometheus *node exporter* to get node metrics

  - Google's cAdvisor to get container metrics

- We will run a Prometheus server to scrape these exporters

- The Prometheus server will be configured to use DNS service discovery

- We will use `tasks.<servicename>` for service discovery

- All these services will be placed on a private internal network

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: prom-manual

## Creating an overlay network for Prometheus

- This is the easiest step ☺

.exercise[

- Create an overlay network:
  ```bash
  docker network create --driver overlay prom
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: prom-manual

## Running the node exporter

- The node exporter *should* run directly on the hosts

- However, it can run from a container, if configured properly
  <br/>
  (it needs to access the host's filesystems, in particular /proc and /sys)

.exercise[

- Start the node exporter:
  ```bash
  docker service create --name node --mode global --network prom \
         --mount type=bind,source=/proc,target=/host/proc \
         --mount type=bind,source=/sys,target=/host/sys \
         --mount type=bind,source=/,target=/rootfs \
         prom/node-exporter \
         --path.procfs /host/proc \
         --path.sysfs /host/sys \
         --collector.filesystem.ignored-mount-points "^/(sys|proc|dev|host|etc)($|/)"
  ```

]

.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)]

---

class: prom-manual

## Running cAdvisor

- Likewise, cAdvisor *should* run directly on the hosts

- But it can run in containers, if configured properly

.exercise[

- Start the cAdvisor collector:
  ```bash
  docker service create --name cadvisor --network prom --mode global \
         --mount type=bind,source=/,target=/rootfs \
         --mount type=bind,source=/var/run,target=/var/run \
         --mount type=bind,source=/sys,target=/sys \
--mount type=bind,source=/var/lib/docker,target=/var/lib/docker \ google/cadvisor:latest ``` ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-manual ## Configuring the Prometheus server This will be our configuration file for Prometheus: ```yaml global: scrape_interval: 10s scrape_configs: - job_name: 'prometheus' static_configs: - targets: ['localhost:9090'] - job_name: 'node' dns_sd_configs: - names: ['tasks.node'] type: 'A' port: 9100 - job_name: 'cadvisor' dns_sd_configs: - names: ['tasks.cadvisor'] type: 'A' port: 8080 ``` .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-manual ## Passing the configuration to the Prometheus server - We need to provide our custom configuration to the Prometheus server - The easiest solution is to create a custom image bundling this configuration - We will use a very simple Dockerfile: ```dockerfile FROM prom/prometheus:v1.4.1 COPY prometheus.yml /etc/prometheus/prometheus.yml ``` (The configuration file, and the Dockerfile, are in the `prom` subdirectory) - We will build this image, and push it to our local registry - Then we will create a service using this image Note: it is also possible to use a `config` to inject that configuration file without having to create this ad-hoc image. 
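
For reference, the `config` approach mentioned in the note could look roughly like the sketch below. It assumes Docker Engine 17.06 or later, and it is an *alternative* to building and pushing a custom image, not an extra step. The function name `deploy_prom_with_config` and the config name `prometheus` are hypothetical; the network, service name, port, image tag, and target path are the ones used in this chapter.

```bash
# Hypothetical helper: run the stock Prometheus image and inject our
# prometheus.yml as a Swarm config instead of building a custom image.
# $1 = path to prometheus.yml (defaults to the workshop copy).
deploy_prom_with_config() {
  local conf="${1:-$HOME/orchestration-workshop/prom/prometheus.yml}"
  # 1. Store the file in the cluster as a config object named "prometheus".
  docker config create prometheus "$conf" || return 1
  # 2. Start the service, mounting the config at the same path that the
  #    Dockerfile above copies the file to.
  docker service create --network prom --name prom \
         --publish 9090:9090 \
         --config source=prometheus,target=/etc/prometheus/prometheus.yml \
         prom/prometheus:v1.4.1
}
```

Calling `deploy_prom_with_config` on a manager node would replace the build, push, and `docker service create` steps; do not run both, since the service name `prom` and port 9090 would conflict.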
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-manual ## Building our custom Prometheus image - We will use the local registry started previously on 127.0.0.1:5000 .exercise[ - Build the image using the provided Dockerfile: ```bash docker build -t 127.0.0.1:5000/prometheus ~/orchestration-workshop/prom ``` - Push the image to our local registry: ```bash docker push 127.0.0.1:5000/prometheus ``` ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-manual ## Running our custom Prometheus image - That's the only service that needs to be published (If we want to access Prometheus from outside!) .exercise[ - Start the Prometheus server: ```bash docker service create --network prom --name prom \ --publish 9090:9090 127.0.0.1:5000/prometheus ``` ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto ## Deploying Prometheus on our cluster - We will use a stack definition (once again) .exercise[ - Make sure we are in the stacks directory: ```bash cd ~/orchestration-workshop/stacks ``` - Build, ship, and run the Prometheus stack: ```bash docker-compose -f prometheus.yml build docker-compose -f prometheus.yml push docker stack deploy -c prometheus.yml prometheus ``` ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Checking our Prometheus server - First, let's make sure that Prometheus is correctly scraping all metrics .exercise[ - Open port 9090 with your browser - Click on "status", then "targets" ] You should see 11 endpoints (5 cadvisor, 5 node, 1 prometheus). Their state should be "UP". 
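
The number 11 follows from the cluster size: each of the two global services runs one task per node, and the Prometheus server also scrapes itself. As a small sanity check (a sketch; `expected_targets` is a hypothetical helper), the expected count for a cluster of any size can be computed like this:

```bash
# Hypothetical helper: expected number of scrape targets for a cluster
# of $1 nodes: one node exporter and one cAdvisor per node, plus the
# Prometheus server itself.
expected_targets() {
  echo $(( $1 * 2 + 1 ))
}
```

On a manager node, `expected_targets "$(docker node ls -q | wc -l)"` prints the number to compare against the "targets" page.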
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Injecting a configuration file (New in Docker Engine 17.06) - We are creating a custom image *just to inject a configuration* - Instead, we could use the base Prometheus image + a `config` - A `config` is a blob (usually, a configuration file) that: - is created and managed through the Docker API (and CLI) - gets persisted into the Raft log (i.e. safely) - can be associated with a service <br/> (this injects the blob as a plain file in the service's containers) .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Differences between `config` and `secret` The two are very similar, but ... - `configs`: - can be injected into any filesystem location - can be viewed and extracted using the Docker API or CLI - `secrets`: - can only be injected into `/run/secrets` - are never stored in clear text on disk - cannot be viewed or extracted with the Docker API or CLI .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Deploying Prometheus with a `config` - The `config` can be created manually or declared in the Compose file - This is what our new Compose file looks like: .small[ ```yaml version: "3.3" services: prometheus: image: prom/prometheus:v1.4.1 ports: - "9090:9090" configs: - source: prometheus target: /etc/prometheus/prometheus.yml ...
configs: prometheus: file: ../prom/prometheus.yml ``` ] (This is from `prometheus+config.yml`) .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Specifying a `config` in a Compose file - In each service, an optional `configs` section can list as many configs as you want - Each config can specify: - an optional `target` (path to inject the configuration; by default: root of the container) - ownership and permissions (by default, the file will be owned by UID 0, i.e. `root`) - These configs reference top-level `configs` elements - The top-level configs can be declared as: - *external*, meaning that it is supposed to be created before you deploy the stack - referencing a file, whose content is used to initialize the config .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Re-deploying Prometheus with a config - We will update the existing stack using `prometheus+config.yml` .exercise[ - Redeploy the `prometheus` stack: ```bash docker stack deploy -c prometheus+config.yml prometheus ``` - Check that Prometheus still works as intended (By connecting to any node of the cluster, on port 9090) ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Accessing the config object from the Docker CLI - Config objects can be viewed from the CLI (or API) .exercise[ - List existing config objects: ```bash docker config ls ``` - View details about our config object: ```bash docker config inspect prometheus_prometheus ``` ] Note: the content of the config blob is shown with BASE64 encoding. <br/> (It doesn't have to be text; it could be an image or any kind of binary content!) 
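To get a feel for that encoding without touching the cluster, here is a small round trip. The YAML snippet is just sample data (an assumption for illustration), not the actual content of our config object.

```bash
# Sketch: encode a small YAML snippet as BASE64 (the form in which the
# config payload is displayed), then decode it back.
payload="$(printf 'global:\n  scrape_interval: 10s\n' | base64)"
decoded="$(printf '%s' "$payload" | base64 -d)"

echo "encoded: $payload"
printf 'decoded:\n%s\n' "$decoded"
```

Since BASE64 only uses printable characters, the same mechanism works for binary payloads as well as text.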
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom-auto, config ## Extracting a config blob - Let's retrieve that Prometheus configuration! .exercise[ - Extract the BASE64 payload with `jq`: ```bash docker config inspect prometheus_prometheus | jq -r '.[0].Spec.Data' ``` - Decode it with `base64 -d`: ```bash docker config inspect prometheus_prometheus | jq -r '.[0].Spec.Data' | base64 -d ``` ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Displaying metrics directly from Prometheus - This is easy ... if you are familiar with PromQL .exercise[ - Click on "Graph", and in "expression", paste the following: ``` sum by (container_label_com_docker_swarm_node_id) ( irate( container_cpu_usage_seconds_total{ container_label_com_docker_swarm_service_name="dockercoins_worker" }[1m] ) ) ``` - Click on the blue "Execute" button and on the "Graph" tab just below ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Building the query from scratch - We are going to build the same query from scratch - This isn't intended to be a detailed PromQL course - This is merely so that you (I) can pretend to know how the previous query works <br/>so that your coworkers (you) can be suitably impressed (or not) (Or, so that we can build other queries if necessary, or adapt if cAdvisor, Prometheus, or anything else changes and requires editing the query!)
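As a preview, the query can be seen as four nested layers. The sketch below assembles them one at a time as plain strings; the variable names are ours, and nothing is sent to Prometheus.

```bash
# Sketch: build the PromQL expression layer by layer (strings only).
metric='container_cpu_usage_seconds_total'
selector='container_label_com_docker_swarm_service_name="dockercoins_worker"'

raw="${metric}"                        # 1. raw counter, all containers
filtered="${metric}{${selector}}"      # 2. only the worker service
rated="irate(${filtered}[1m])"         # 3. counter turned into a rate
summed="sum by (container_label_com_docker_swarm_node_id) (${rated})"  # 4. one series per node

printf '%s\n' "$raw" "$filtered" "$rated" "$summed"
```

Each printed line can be pasted into the expression box to see what that layer contributes. Whitespace is not significant here, so the last line is equivalent to the multi-line query shown previously.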
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Displaying a raw metric for *all* containers - Click on the "Graph" tab on top *This takes us to a blank dashboard* - Click on the "Insert metric at cursor" drop down, and select `container_cpu_usage_seconds_total` *This puts the metric name in the query box* - Click on "Execute" *This fills a table of measurements below* - Click on "Graph" (next to "Console") *This replaces the table of measurements with a series of graphs (after a few seconds)* .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Selecting metrics for a specific service - Hover over the lines in the graph (Look for the ones that have labels like `container_label_com_docker_...`) - Edit the query, adding a condition between curly braces: .small[`container_cpu_usage_seconds_total{container_label_com_docker_swarm_service_name="dockercoins_worker"}`] - Click on "Execute" *Now we should see one line per CPU per container* - If you want to select by container ID, you can use a regex match: `id=~"/docker/c4bf.*"` - You can also specify multiple conditions by separating them with commas .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Turn counters into rates - What we see is the total amount of CPU used (in seconds) - We want to see a *rate* (CPU time used / real time) - To get a moving average over 1 minute periods, enclose the current expression within: ``` rate ( ... { ... 
} [1m] ) ``` *This should turn our steadily-increasing CPU counter into a wavy graph* - To get an instantaneous rate, use `irate` instead of `rate` (The time window is then used to limit how far behind to look for data if data points are missing in case of scrape failure; see [here](https://www.robustperception.io/irate-graphs-are-better-graphs/) for more details!) *This should show spikes that were previously invisible because they were smoothed out* .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Aggregate multiple data series - We have one graph per CPU per container; we want to sum them - Enclose the whole expression within: ``` sum ( ... ) ``` *We now see a single graph* .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom ## Collapse dimensions - If we have multiple containers we can also collapse just the CPU dimension: ``` sum without (cpu) ( ... ) ``` *This shows the same graph, but preserves the other labels* - Congratulations, you wrote your first PromQL expression from scratch! (I'd like to thank [Johannes Ziemke](https://twitter.com/discordianfish) and [Julius Volz](https://twitter.com/juliusvolz) for their help with Prometheus!) 
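Going back to the difference between `rate` and `irate`: here is a simplified worked example on made-up samples (one scrape every 10 seconds, with a burst of CPU usage in the last interval). This is only an approximation under stated assumptions; the real `rate` function also extrapolates to the window boundaries and handles counter resets.

```bash
# Sketch: "timestamp value" pairs for a CPU counter (hypothetical data).
samples='0 100.0
10 100.5
20 101.0
30 101.5
40 102.0
50 102.5
60 107.5'

# rate  ~ (last - first) / elapsed time over the whole window
# irate ~ (last - previous) / time between the last two samples
rates="$(printf '%s\n' "$samples" | awk '
  NR == 1 { t0 = $1; v0 = $2 }
  { tp = tl; vp = vl; tl = $1; vl = $2 }
  END {
    printf "rate=%.3f irate=%.3f\n", (vl - v0) / (tl - t0), (vl - vp) / (tl - tp)
  }')"
echo "$rates"
# prints: rate=0.125 irate=0.500
```

The burst at the end shows up as half a CPU with `irate`, but is averaged down to an eighth of a CPU over the one-minute window with `rate`.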
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom, snap ## Comparing Snap and Prometheus data - If you haven't set up Snap, InfluxDB, and Grafana, skip this section - If you have closed the Grafana tab, you might have to set up a new dashboard again (unless you saved it before navigating away) - To redo the setup, just follow the instructions from the previous chapter again .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom, snap ## Add Prometheus as a data source in Grafana .exercise[ - In a new tab, connect to Grafana (port 3000) - Click on the Grafana logo (the orange spiral in the top-left corner) - Click on "Data Sources" - Click on the green "Add data source" button ] We see the same input form that we filled in earlier to connect to InfluxDB. .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom, snap ## Connecting to Prometheus from Grafana .exercise[ - Enter "prom" in the name field - Select "Prometheus" as the source type - Enter http://(IP.address.of.any.node):9090 in the Url field - Select "direct" as the access method - Click on "Save and test" ] Again, we should see a green box telling us "Data source is working." Otherwise, double-check every field and try again! .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom, snap ## Adding the Prometheus data to our dashboard .exercise[ - Go back to the tab where we had our first Grafana dashboard - Click on the blue "Add row" button in the lower right corner - Click on the green tab on the left; select "Add panel" and "Graph" ] This takes us to the graph editor that we used earlier.
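If you would rather script the data source creation than click through the form, Grafana also exposes an HTTP API for it. The sketch below mirrors the values entered above; the function name `grafana_add_prom` is hypothetical, and the `admin:admin` credentials are an assumption (Grafana's defaults, which your setup may have changed).

```bash
# Sketch: register Prometheus as a Grafana data source through the HTTP API.
# Usage: grafana_add_prom <grafana URL> <prometheus URL>
grafana_add_prom() {
  local grafana_url="$1" prom_url="$2"
  # Same fields as the form: name, type, URL, and "direct" access
  curl -s -u admin:admin -H 'Content-Type: application/json' \
    -X POST "$grafana_url/api/datasources" \
    -d "{\"name\":\"prom\",\"type\":\"prometheus\",\"url\":\"$prom_url\",\"access\":\"direct\"}"
}
```

For instance: `grafana_add_prom http://(IP.address.of.any.node):3000 http://(IP.address.of.any.node):9090`.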
.debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom, snap ## Querying Prometheus data from Grafana The editor is a bit less friendly than the one we used for InfluxDB. .exercise[ - Select "prom" as Panel data source - Paste the query in the query field: ``` sum without (cpu, id) ( irate ( container_cpu_usage_seconds_total{ container_label_com_docker_swarm_service_name="influxdb"}[1m] ) ) ``` - Click outside of the query field to confirm - Close the row editor by clicking the "X" in the top right area ] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- class: prom, snap ## Interpreting results - The two graphs *should* be similar - Protip: align the time references! .exercise[ - Click on the clock in the top right corner - Select "last 30 minutes" - Click on "Zoom out" - Now press the right arrow key (hold it down and watch the CPU usage increase!) 
] *Adjusting units is left as an exercise for the reader.* .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- ## More resources on container metrics - [Prometheus, a Whirlwind Tour](https://speakerdeck.com/copyconstructor/prometheus-a-whirlwind-tour), an original overview of Prometheus - [Docker Swarm & Container Overview](https://grafana.net/dashboards/609), a custom dashboard for Grafana - [Gathering Container Metrics](http://jpetazzo.github.io/2013/10/08/docker-containers-metrics/), a blog post about cgroups - [The Prometheus Time Series Database](https://www.youtube.com/watch?v=HbnGSNEjhUc), a talk explaining why custom data storage is necessary for metrics .blackbelt[DC17US: Monitoring, the Prometheus Way ([video](https://www.youtube.com/watch?v=PDxcEzu62jk&list=PLkA60AVN3hh-biQ6SCtBJ-WVTyBmmYho8&index=5))] .blackbelt[DC17EU: Prometheus 2.0 Storage Engine ([video](https://dockercon.docker.com/watch/NNZ8GXHGomouwSXtXnxb8P))] .debug[[swarm/metrics.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/metrics.md)] --- name: toc-whats-next class: title What's next? .nav[[Back to table of contents](#toc-chapter-1)] .debug[(automatically generated title slide)] --- class: title, extra-details # What's next? ## (What to expect in future versions of this workshop) .debug[[swarm/end.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/end.md)] --- class: extra-details ## Implemented and stable, but out of scope - [Docker Content Trust](https://docs.docker.com/engine/security/trust/content_trust/) and [Notary](https://github.com/docker/notary) (image signature and verification) - Image security scanning (many products available, Docker Inc. 
and 3rd party) - [Docker Cloud](https://cloud.docker.com/) and [Docker Datacenter](https://www.docker.com/products/docker-datacenter) (commercial offering with node management, secure registry, CI/CD pipelines, all the bells and whistles) - Network and storage plugins .debug[[swarm/end.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/end.md)] --- class: extra-details ## Work in progress - Demo at least one volume plugin <br/>(bonus points if it's a distributed storage system) - ..................................... (your favorite feature here) Reminder: there is a tag for each iteration of the content in the GitHub repository. It makes it easy to come back later and check what has changed since you did it! .debug[[swarm/end.md](https://github.com/jpetazzo/container.training/tree/lisa17t9/slides/swarm/end.md)] --- class: title That's all folks! <br/> Questions? .small[.small[ AJ ([@s0ulshake](https://twitter.com/s0ulshake)) — [@TravisCI](https://twitter.com/travisci) Jérôme ([@jpetazzo](https://twitter.com/jpetazzo)) — [@Docker](https://twitter.com/docker) ]] <!-- Tiffany ([@tiffanyfayj](https://twitter.com/tiffanyfayj)) AJ ([@s0ulshake](https://twitter.com/s0ulshake)) --> .debug[(inline)]